Netflix’s Real-Time Graph: A Glimpse into the Future of Personalized Experiences

Netflix is no longer simply a streaming service; its expansion into gaming, live events, and advertising demands a sophisticated understanding of how users interact across its diverse ecosystem. To meet this challenge, Netflix engineers have developed Graph Abstraction, a high-throughput system capable of managing massive graph data in real time. This isn’t just about better recommendations – it’s a foundational shift in how Netflix understands and responds to user behavior.

The Challenge of Siloed Data

Traditionally, Netflix’s microservices architecture, while offering flexibility, created data silos. Video streaming data resided in one place, gaming data in another, and authentication information separately. Connecting these disparate pieces of information to create a unified view of the member experience proved difficult. Graph Abstraction addresses this by providing a centralized platform for representing relationships between users, content, and services.

How Graph Abstraction Works: Speed and Scale

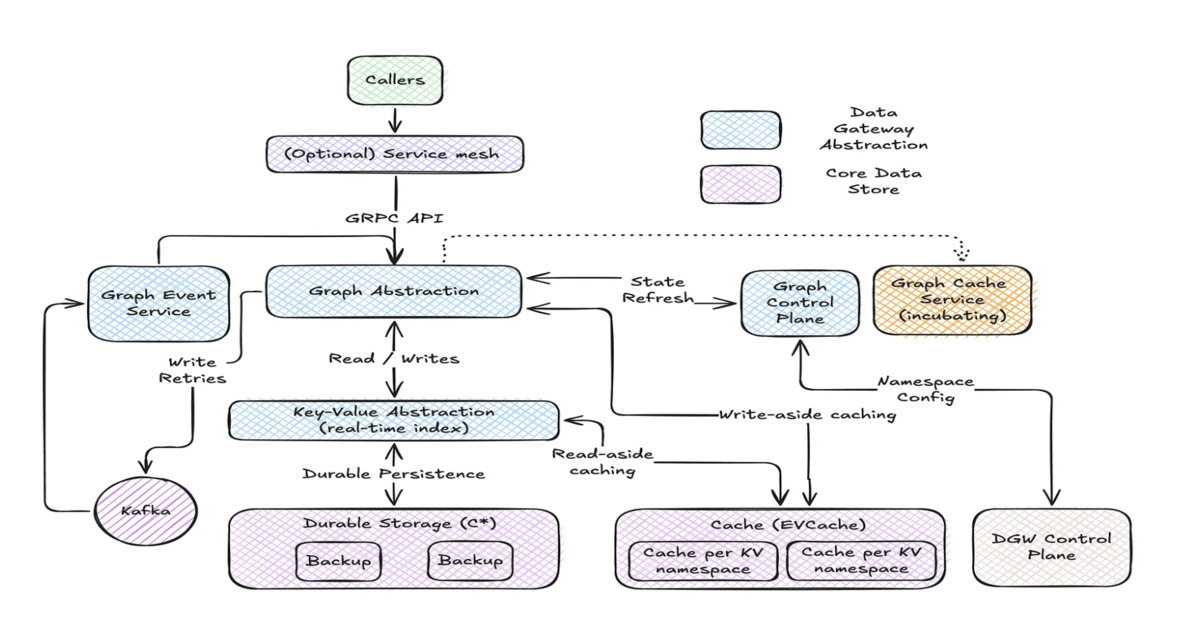

Graph Abstraction is designed for speed and scale. It delivers single-digit millisecond latency for simple queries and under 50 milliseconds for more complex two-hop queries. It achieves this by restricting traversal depth, requiring a defined starting node, and using caching strategies such as write-aside and read-aside caching. The system stores the latest graph state in a Key Value abstraction and historical changes in a TimeSeries abstraction.
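To make the traversal constraints concrete, here is a minimal Python sketch of a depth-limited traversal that insists on a starting node and reads neighbors through a read-aside cache. It is an illustration under assumptions, not Netflix's implementation: the `TinyGraph` class, the `MAX_DEPTH` value of 2, and the invalidate-on-write behavior are all hypothetical choices made for this example.

```python
from collections import defaultdict

MAX_DEPTH = 2  # assumed cap; the article only says traversal depth is restricted


class TinyGraph:
    """In-memory stand-in for a graph store (hypothetical, for illustration only)."""

    def __init__(self):
        self._edges = defaultdict(set)  # node -> set of neighbor nodes (the "store")
        self._cache = {}                # read-aside cache: node -> frozenset of neighbors
        self.store_reads = 0            # how many lookups reached the store

    def add_edge(self, src, dst):
        self._edges[src].add(dst)
        # Assumption: drop the cached entry on write so later reads stay fresh.
        self._cache.pop(src, None)

    def neighbors(self, node):
        cached = self._cache.get(node)
        if cached is not None:
            return cached               # cache hit: the store is not touched
        self.store_reads += 1           # cache miss: read the store, then fill the cache
        result = frozenset(self._edges.get(node, ()))
        self._cache[node] = result
        return result

    def traverse(self, start, depth):
        """Return every node reachable from `start` within `depth` hops."""
        if start is None:
            raise ValueError("a starting node is required")
        if not 1 <= depth <= MAX_DEPTH:
            raise ValueError(f"depth must be between 1 and {MAX_DEPTH}")
        seen = {start}
        frontier = {start}
        for _ in range(depth):
            next_frontier = set()
            for node in frontier:
                next_frontier |= self.neighbors(node) - seen
            seen |= next_frontier
            frontier = next_frontier
        return seen - {start}


graph = TinyGraph()
graph.add_edge("member:1", "title:a")
graph.add_edge("title:a", "genre:x")
graph.add_edge("genre:x", "title:b")
print(sorted(graph.traverse("member:1", depth=2)))
```

Because every query starts from a known node and stops after a fixed number of hops, the work per request stays bounded by the neighborhood size rather than the size of the whole graph, which is what makes tight latency targets plausible.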

Global availability is ensured through asynchronous replication across regions, balancing latency, availability, and consistency. The platform utilizes a gRPC traversal API inspired by Gremlin, allowing services to chain queries and apply filters.
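The idea of chaining steps and filters can be sketched with a small fluent interface in Python. This is only a rough analogy to a Gremlin-style traversal: the `Graph` and `Traversal` classes and the `v`, `out`, `has`, and `to_list` method names are hypothetical and do not reflect Netflix's actual gRPC API, and the sketch evaluates each step eagerly in memory rather than sending a request to a service.

```python
from dataclasses import dataclass, field


@dataclass
class Graph:
    edges: list = field(default_factory=list)  # (src, label, dst) triples
    props: dict = field(default_factory=dict)  # node id -> property dict

    def v(self, start):
        """Begin a traversal at a single, explicitly named starting node."""
        return Traversal(self, [start])


class Traversal:
    """Each step returns a new Traversal, so calls can be chained."""

    def __init__(self, graph, nodes):
        self._graph = graph
        self._nodes = nodes

    def out(self, label):
        """Follow outgoing edges that carry the given label."""
        current = set(self._nodes)
        reached = [
            dst
            for (src, edge_label, dst) in self._graph.edges
            if src in current and edge_label == label
        ]
        return Traversal(self._graph, reached)

    def has(self, key, value):
        """Keep only nodes whose property `key` equals `value`."""
        kept = [
            node
            for node in self._nodes
            if self._graph.props.get(node, {}).get(key) == value
        ]
        return Traversal(self._graph, kept)

    def to_list(self):
        """Finish the traversal and return distinct node ids in sorted order."""
        return sorted(set(self._nodes))


graph = Graph(
    edges=[
        ("member:1", "played", "game:chess"),
        ("member:1", "played", "game:golf"),
        ("member:1", "watched", "title:a"),
    ],
    props={
        "game:chess": {"genre": "strategy"},
        "game:golf": {"genre": "sports"},
    },
)
print(graph.v("member:1").out("played").has("genre", "strategy").to_list())
```

A remote API would more likely collect the chained steps into a single request message and execute them server-side, which saves a network round trip per step.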

Beyond Recommendations: Diverse Use Cases

Graph Abstraction powers a variety of internal services. A real-time distributed graph captures interactions across all Netflix services. A social graph enhances Netflix Gaming by modeling user relationships. A service topology graph aids engineers in analyzing dependencies during incidents and identifying root causes. This versatility demonstrates the platform’s potential to support a wide range of applications beyond personalized recommendations.

The Rise of Graph Databases in the Streaming Era

Netflix’s investment in Graph Abstraction reflects a broader trend in the streaming industry. As services compete for user attention, the ability to deliver highly personalized experiences becomes paramount. Graph databases are uniquely suited to this task, enabling companies to model complex relationships and uncover hidden patterns in user behavior. This is particularly crucial as streaming platforms expand into new areas like interactive content and live events.

Future Trends: AI-Powered Graph Analytics

The integration of artificial intelligence (AI) with graph databases is poised to unlock even greater potential. Imagine a system that not only recommends content based on past viewing history but also predicts future preferences based on social connections and emerging trends. AI algorithms can analyze graph data to identify influential users, detect fraudulent activity, and optimize content distribution. The 2026 AI predictions report highlights the need for unified context engines, and Graph Abstraction provides a strong foundation for building such systems.

The Convergence of Real-Time and Historical Data

Netflix’s use of both a Key Value abstraction for current state and a TimeSeries abstraction for historical data is a significant development. This allows for both real-time personalization and long-term trend analysis. Future graph database systems will likely follow this pattern, offering a unified view of both current and historical relationships. This will enable more sophisticated analytics, auditing, and temporal queries.
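The split between current state and history can be illustrated with a small Python sketch. It assumes a plain dictionary standing in for the Key Value abstraction and an append-only list standing in for the TimeSeries abstraction; the `EdgeStore` class, its method names, and the integer timestamps are hypothetical and chosen only for this example.

```python
class EdgeStore:
    """Toy store: a dict holds the latest edge state, a list holds every change."""

    def __init__(self):
        self._latest = {}   # (src, dst) -> properties; stands in for the key-value store
        self._history = []  # (timestamp, src, dst, properties); stands in for the time series

    def upsert(self, timestamp, src, dst, props):
        """Overwrite the current state and append the change to the history."""
        self._latest[(src, dst)] = dict(props)
        self._history.append((timestamp, src, dst, dict(props)))

    def current(self, src, dst):
        """Real-time read: one key lookup, no history scan."""
        return self._latest.get((src, dst))

    def as_of(self, timestamp, src, dst):
        """Temporal read: the most recent version recorded at or before `timestamp`."""
        best = None
        for recorded_at, s, d, props in self._history:
            if s == src and d == dst and recorded_at <= timestamp:
                if best is None or recorded_at >= best[0]:
                    best = (recorded_at, props)
        return best[1] if best else None


store = EdgeStore()
store.upsert(1, "member:1", "title:a", {"progress": 0.2})
store.upsert(5, "member:1", "title:a", {"progress": 0.9})
print(store.current("member:1", "title:a"))
print(store.as_of(3, "member:1", "title:a"))
```

The current-state read stays cheap regardless of how much history accumulates, while auditing and trend queries go to the history and can afford to be slower.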

Pro Tip:

When evaluating graph database solutions, consider the trade-offs between query flexibility and performance. For operational workloads that require high throughput and low latency, a system that prioritizes performance may be more suitable than a traditional graph database with extensive query capabilities.

FAQ

- What is Graph Abstraction? Graph Abstraction is Netflix’s high-throughput system for managing large-scale graph data in real time.

- What are the key benefits of Graph Abstraction? It provides millisecond-level query performance and global availability, and it supports diverse use cases across Netflix.

- How does Netflix ensure global availability? Through asynchronous replication of data across regions.

- What types of queries does Graph Abstraction support? It supports traversals with defined starting nodes and limited depth, optimized for speed and scalability.

Did you know? Netflix’s Graph Abstraction platform manages roughly 650 TB of graph data.

Explore more about Netflix’s engineering innovations on the Netflix Tech Blog.