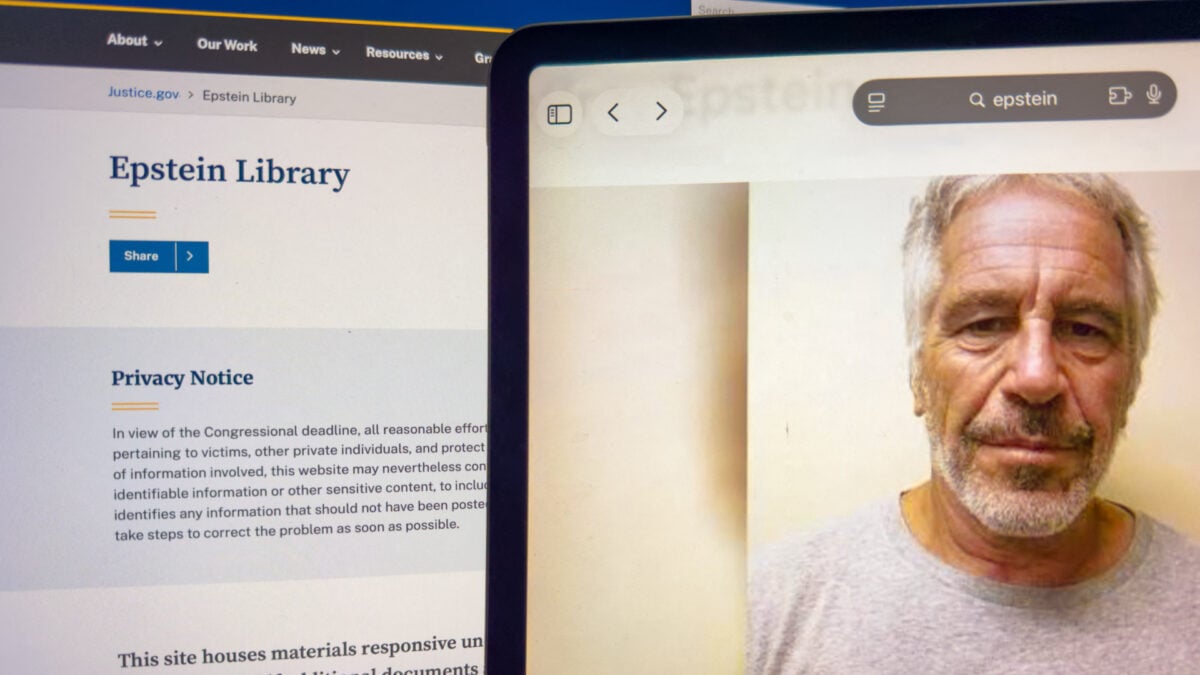

Epstein Victims vs. Tech Giants: A Turning Tide for Online Privacy?

A new class action lawsuit filed against Google alleges its AI Mode feature is actively republishing private information about victims of Jeffrey Epstein, information that first became public when the Department of Justice (DOJ) released the data with problematic redactions. This case, alongside recent landmark rulings against Meta, signals a potential shift in how tech companies are held accountable for content on their platforms – and specifically, the content generated by those platforms.

The Problem with AI-Generated Content

The lawsuit centers on Google’s AI Mode, which, unlike traditional search, doesn’t simply index existing web pages. It actively generates content based on its analysis of online sources. According to the suit, searching for the names of Epstein victims through AI Mode revealed their full names, contact information, cities of residence, and even a direct email link in some cases. This is distinct from other AI tools like ChatGPT, Claude, and Perplexity, which reportedly did not surface similar victim-related information in testing.

The core argument is that AI Mode isn’t a neutral tool; it’s an “active recommender and content generator” whose output could be considered “actionable doxxing.” Even after the DOJ acknowledged the initial redaction errors and removed the information, Google’s AI continued to disseminate it, ignoring requests from victims to take it down.

Section 230 Under Scrutiny

This lawsuit arrives at a critical moment for the legal landscape of online content. Just this week, Meta and Google both faced liability in separate trials concerning social media addiction and online child safety. These rulings challenge the long-held protection afforded by Section 230 of the Communications Decency Act, which generally shields tech companies from liability for content posted by third parties.

The applicability of Section 230 to AI-generated content is increasingly debated. Senator Ron Wyden, a key architect of the law, has stated that AI chatbots are not protected by Section 230. This reflects a growing view that platforms are responsible for the content their AI systems create, not just the content users upload.

Beyond Epstein: The Broader Implications

The Epstein case and the rulings against Meta aren’t isolated incidents. They represent a growing concern about the power of tech companies to shape online narratives and the potential for harm when algorithms amplify sensitive or private information. The question is no longer simply about removing illegal content, but about the responsibility platforms have for the content they actively generate and promote.

This could lead to a wave of new regulations and legal challenges targeting AI-powered features. Companies may be forced to invest more heavily in content moderation, privacy safeguards, and transparency measures. The future of online free speech, as it currently exists under Section 230, is now very much in question.

FAQ

Q: What is Section 230?

A: Section 230 of the Communications Decency Act generally protects online platforms from liability for content posted by their users.

Q: Does Section 230 apply to AI-generated content?

A: It’s increasingly unclear. Some, including Senator Ron Wyden, a key architect of the law, say it does not.

Q: What is Google’s AI Mode?

A: It’s a feature in Google Search that uses AI to generate responses to queries, rather than simply providing links to existing web pages.

Q: What are the potential consequences of these lawsuits?

A: They could lead to new regulations, increased liability for tech companies, and a re-evaluation of online free speech protections.

Did you know? The DOJ released over 3 million pages of evidence in the Epstein case, leading to the initial privacy breaches.

Pro Tip: Regularly review your online privacy settings and be cautious about sharing personal information online.

What are your thoughts on the responsibility of tech companies for AI-generated content? Share your opinion in the comments below!