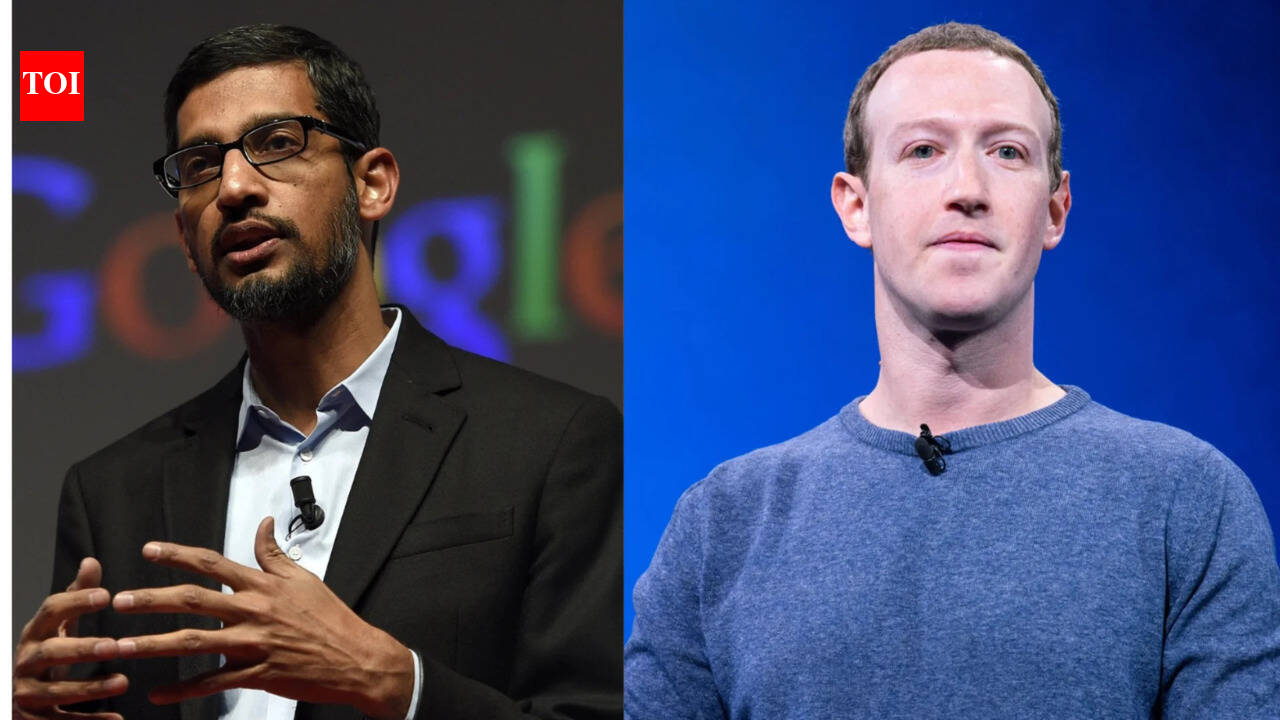

From Cage Fights to Corporate Raids: The Shifting Alliances of Musk and Zuckerberg

The rivalry between Elon Musk and Mark Zuckerberg, once publicly displayed through talk of a literal cage fight, took an unexpected turn in early 2025, according to recently released court documents. These documents reveal a period of collaboration, where Musk sought Zuckerberg’s assistance – and even a potential partnership – in a bid to acquire OpenAI.

A Joint Bid for OpenAI? The Texts Reveal All

A series of texts exchanged between Musk and Zuckerberg on February 3, 2025, surfaced as part of Musk’s lawsuit against OpenAI. Zuckerberg offered support regarding Musk’s efforts through the Department of Government Efficiency (DOGE), stating that Meta’s teams were “on alert to take down content doxxing or threatening” those connected to Musk’s work. Musk then followed up by asking if Zuckerberg would be “open to the idea of bidding on OpenAI with me and some others?” Zuckerberg responded by suggesting a phone call to discuss the possibility.

Why the Sudden Overture? The Context of 2025

This outreach occurred during a pivotal moment for both tech leaders. Musk, who co-founded OpenAI as a non-profit, had grown increasingly critical of its shift towards a for-profit model and had since launched xAI as a direct competitor. Zuckerberg, meanwhile, was publicly voicing concerns about an “emasculated” corporate America, as highlighted in a Joe Rogan podcast appearance around the same time. The potential alliance suggests a shared concern about the direction of AI development and a willingness to explore alternative control structures.

The Bid That Wasn’t: Musk’s $97.4 Billion Offer

Musk ultimately led a consortium in an unsolicited $97.4 billion bid to acquire OpenAI. OpenAI CEO Sam Altman rejected the proposal. Notably, despite the initial discussions, Zuckerberg and Meta did not sign on to join Musk’s bid, according to court filings.

The Broader Implications: AI Consolidation and Big Tech Alliances

This episode highlights the complex dynamics at play in the rapidly evolving AI landscape. The willingness of two of the world’s most prominent tech figures to consider a joint acquisition of OpenAI underscores the high stakes involved. It also raises questions about the potential for future alliances and consolidation within the industry. The incident demonstrates that even fierce rivals can find common ground when faced with significant strategic opportunities.

The Role of Government Influence: The DOGE Connection

Zuckerberg’s initial offer of assistance related to Musk’s work with the Department of Government Efficiency (DOGE) is noteworthy. It suggests a level of coordination with – or at least a willingness to support – Musk’s government-focused initiatives. The details of DOGE’s activities remain somewhat opaque, but the exchange indicates a potential intersection between Musk’s broader ambitions and Meta’s willingness to provide support.

Future Trends: What’s Next for the AI Landscape

The Musk-Zuckerberg saga offers a glimpse into potential future trends in the AI industry:

Increased M&A Activity

The attempted OpenAI acquisition signals a likely increase in mergers and acquisitions as major tech companies seek to consolidate their positions and gain access to critical AI technologies. Expect to see further bids and partnerships as the competitive landscape intensifies.

Shifting Alliances

The fluidity of the relationship between Musk and Zuckerberg demonstrates that alliances in the tech world are often temporary and driven by strategic considerations. Companies will likely continue to form and dissolve partnerships as their priorities evolve.

Government Scrutiny and Influence

The involvement of the Department of Government Efficiency highlights the growing role of government in shaping the AI landscape. Expect increased scrutiny and regulation of AI technologies, as well as potential government-backed initiatives to promote innovation.

FAQ

Q: Did Mark Zuckerberg join Elon Musk’s bid for OpenAI?

A: No, despite initial discussions, Zuckerberg and Meta did not sign on to join Musk’s bid.

Q: What is the Department of Government Efficiency (DOGE)?

A: DOGE is a government-focused initiative led by Elon Musk. Details about its specific activities are limited.

Q: When did these texts between Musk and Zuckerberg take place?

A: The texts were sent on February 3, 2025.

Q: What was the value of Musk’s bid for OpenAI?

A: Musk’s bid was for $97.4 billion.

Did you know? The initial tension between Musk and Zuckerberg culminated in a public challenge to a cage fight, a spectacle that ultimately did not materialize.

