California Governor Gavin Newsom has signed an executive order requiring artificial intelligence companies seeking state government contracts to demonstrate robust public safety measures. The move directly challenges an executive order President Donald Trump issued in December urging states to refrain from regulating AI companies, on the grounds that such regulations would stifle innovation and cede ground in the global tech race.

In March, President Trump explicitly asked states to avoid implementing AI-specific regulations, warning of potential lawsuits from the U.S. Attorney General's newly formed AI task force. But Newsom's order, signed Monday, signals growing defiance among state leaders who believe proactive safeguards are essential. Several states, including Utah and Colorado, have seen earlier attempts at AI regulation stall after White House criticism.

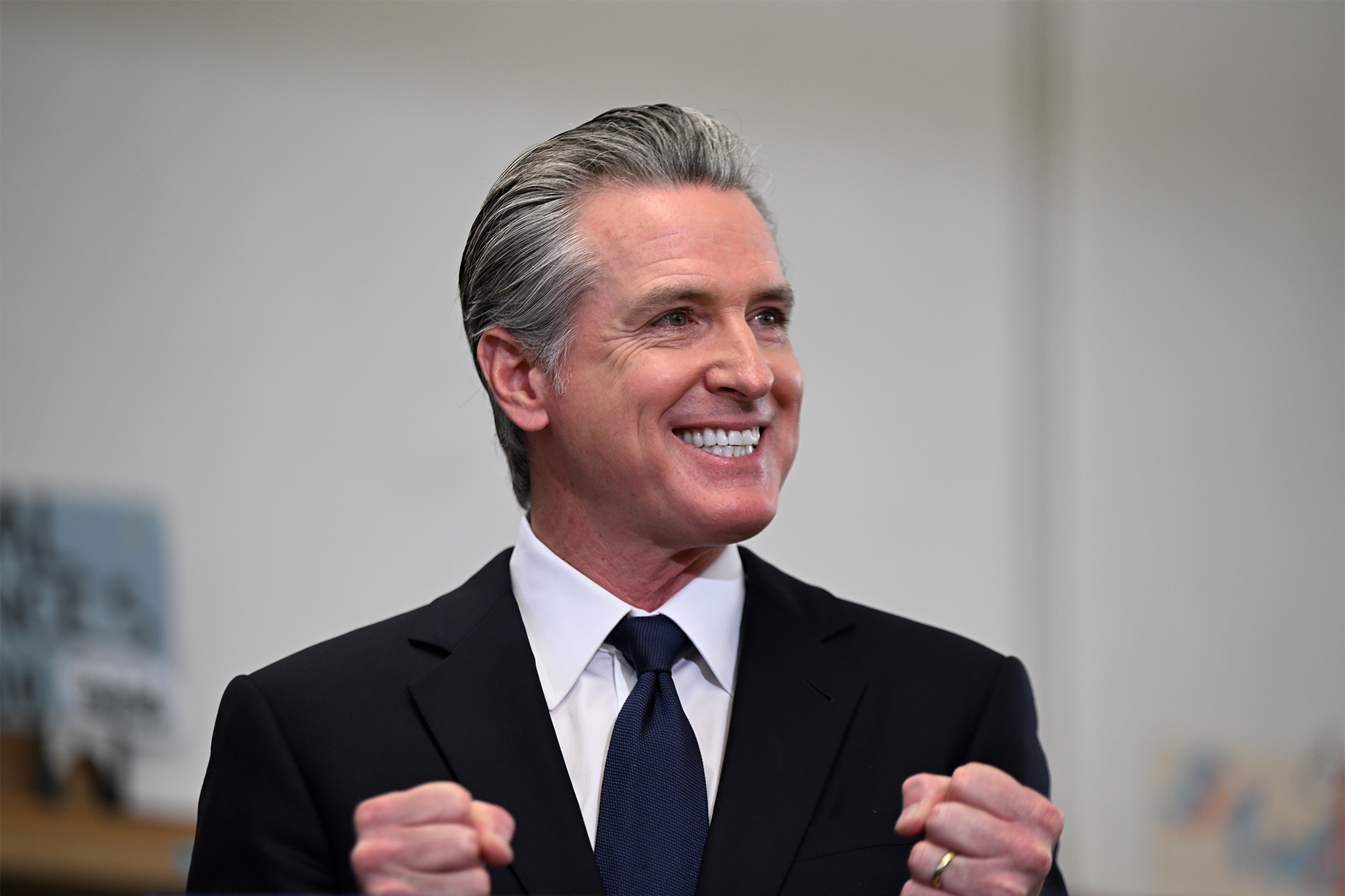

According to his office, Newsom's approach prioritizes safety standards, the prevention of AI misuse and the mitigation of risks to consumers. "California's always been the birthplace of innovation. But we also understand the flip side: in the wrong hands, innovation can be misused in ways that put people at risk," Newsom said in a statement. He emphasized California's leadership in AI and its commitment to protecting rights rather than exploiting them.

The executive order mandates that companies bidding on California state contracts provide comprehensive safety and privacy protections related to artificial intelligence. The state will also scrutinize these technologies for surveillance capabilities and potential impacts on free speech. In addition, California will review whether companies have been flagged as "supply chain risks" by the federal government, and procurement may continue if the state determines that no significant risk exists.

This order arrives amid a legal battle between Anthropic, a San Francisco-based AI company, and the U.S. Department of Defense. Anthropic is suing the DoD after being labeled a “supply chain risk,” a designation the company disputes.

To combat the spread of misinformation, the California Department of Technology will develop recommendations and best practices for watermarking AI-generated videos, aiming to clearly distinguish them from authentic content. Companies have four months to comply with the new requirements.

What does this executive order actually require of AI companies?

The order compels AI companies seeking state contracts to demonstrate adherence to strong safety standards, prevent the misuse of their technologies, and proactively address potential risks to consumers. This includes providing detailed information about their AI systems’ safety protocols, privacy safeguards, and potential impacts on free speech. The state will also assess whether a company has been identified as a supply chain risk by the federal government.

What prompted this action from Governor Newsom?

Newsom’s move is a direct response to the Trump administration’s efforts to limit state-level regulation of AI. The Governor views the federal approach as insufficient to protect Californians from potential harms associated with rapidly evolving AI technologies. He believes California must take a proactive role in ensuring responsible AI development and deployment.

Could this lead to legal challenges?

It’s possible. The Trump administration has signaled its willingness to pursue legal action against states that enact AI regulations it deems overly restrictive. However, the specifics of California’s order – focusing on companies doing business *with the state* – may offer some legal buffer. The outcome will likely depend on how aggressively the federal government chooses to challenge the order and how California defends its right to protect its citizens.

What’s the broader significance of this conflict between California and the federal government?

This represents a significant fault line in the debate over AI governance. California’s action underscores a growing belief among some states that federal oversight is lagging behind the pace of technological development and that states must step in to protect their residents. It sets the stage for a potential showdown between state and federal authority over the future of AI regulation.

As AI continues to rapidly evolve, will other states follow California’s lead in prioritizing consumer protection and responsible innovation, or will the federal government’s approach of minimal regulation ultimately prevail?