The Year 2000 problem, better known as Y2K, wasn’t just a glitch; it was a global exercise in preventative maintenance that cost billions of dollars and an enormous number of engineering hours. At its core, Y2K was a failure of foresight in data architecture: programmers stored years as two digits to save precious memory. Now, as we enter the era of Large Language Models (LLMs) like GPT-4, Claude, and Gemini, we are seeing a fundamental shift in how we handle the very “legacy code” that caused the Y2K panic in the first place.

The Legacy Code Paradox

If we imagine a world where LLMs existed in 1998, the Y2K crisis would have looked entirely different. The panic of the late 90s was driven by the sheer scale of the manual audit. Engineers had to hunt through millions of lines of COBOL and Fortran, manually identifying date-handling logic that would fail when the clock struck midnight on January 1, 2000. It was a needle-in-a-haystack problem on a global scale.
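To make the audit target concrete, here is a minimal sketch of a naive scanner for the kind of field declaration auditors were looking for. It is a plain regular-expression heuristic, not a reconstruction of any real Y2K tool and not how an LLM works. The record layout, the field names, and the naming convention the regex relies on (`YY`, `YEAR`, or `YR` in the field name) are all assumptions made up for illustration.

```python
import re

# Hypothetical COBOL record layout, invented for this example.
SAMPLE_COPYBOOK = "\n".join([
    "       01  CUSTOMER-RECORD.",
    "           05  CUST-NAME        PIC X(30).",
    "           05  BIRTH-YY         PIC 99.",
    "           05  POLICY-YEAR      PIC 9(2).",
    "           05  EXPIRY-YEAR      PIC 9(4).",
    "           05  BALANCE          PIC 9(7)V99.",
])

# Heuristic: a level number, a field name containing YY/YEAR/YR,
# and a two-digit numeric picture (PIC 99 or PIC 9(2)).
TWO_DIGIT_YEAR_FIELD = re.compile(
    r"\s*\d{2}\s+([A-Z0-9-]*(?:YY|YEAR|YR)[A-Z0-9-]*)\s+PIC\s+(?:99|9\(0*2\))\s*\.",
    re.IGNORECASE,
)


def find_two_digit_year_fields(source: str) -> list[tuple[int, str]]:
    """Return (line number, field name) for each suspicious declaration."""
    hits = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        match = TWO_DIGIT_YEAR_FIELD.match(line)
        if match:
            hits.append((line_no, match.group(1)))
    return hits


for line_no, name in find_two_digit_year_fields(SAMPLE_COPYBOOK):
    print(f"line {line_no}: {name} looks like a two-digit year field")
```

On the sample layout this flags `BIRTH-YY` and `POLICY-YEAR` and skips the four-digit `EXPIRY-YEAR`. A name-based heuristic like this misses any year stored in a field called something else, which is part of why the real audits were so labor-intensive.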

Modern LLMs are essentially pattern-recognition engines on a massive scale. They can ingest large portions of a legacy codebase, flag deprecated patterns, and suggest refactored versions of a function in seconds rather than months. A model today doesn’t just “find” a bug; it can often infer the likely intent of the original programmer and propose a fix that updates the date format while preserving the system’s behavior, although any such proposal still has to be verified.

Technical Context: The Y2K Root Cause

Early computers had limited memory. To save space, programmers used two digits for years (e.g., “98” for 1998). The “bug” occurred because systems would interpret “00” as 1900 rather than 2000, potentially crashing financial systems, power grids, and air traffic control that relied on date-based calculations.
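The failure mode is easy to reproduce. The sketch below computes an age by subtracting two-digit years, then repeats the calculation after expanding each year with "windowing", one of the remediation techniques used at the time. The function names and the pivot value of 50 are assumptions chosen for illustration, not taken from any particular system.

```python
def age_two_digit(birth_yy: int, current_yy: int) -> int:
    """Age as a two-digit-year system would compute it: plain subtraction."""
    return current_yy - birth_yy


def expand_year(yy: int, pivot: int = 50) -> int:
    """Windowing: treat yy >= pivot as 19yy and yy < pivot as 20yy.

    The pivot of 50 is a hypothetical choice; real systems picked
    their own window based on the data they held.
    """
    if not 0 <= yy <= 99:
        raise ValueError("expected a two-digit year")
    return 1900 + yy if yy >= pivot else 2000 + yy


def age_windowed(birth_yy: int, current_yy: int, pivot: int = 50) -> int:
    """Same calculation, performed on expanded four-digit years."""
    return expand_year(current_yy, pivot) - expand_year(birth_yy, pivot)


print(age_two_digit(65, 99))  # 34: correct in 1999
print(age_two_digit(65, 0))   # -65: the rollover bug in 2000
print(age_windowed(65, 0))    # 35: correct after windowing
```

Windowing only defers the problem: with a pivot of 50, the same code misreads dates again once real years reach 2050, which is why expanding stored fields to four digits was the more durable fix.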

From Manual Audits to Automated Refactoring

The intersection of AI and legacy systems is where the real business value lies today. Many global banks and government agencies still run on mainframe systems written decades ago. The risk isn’t just a date flip anymore; it’s the “knowledge gap.” The people who wrote the original code are retiring, and the documentation is often non-existent.

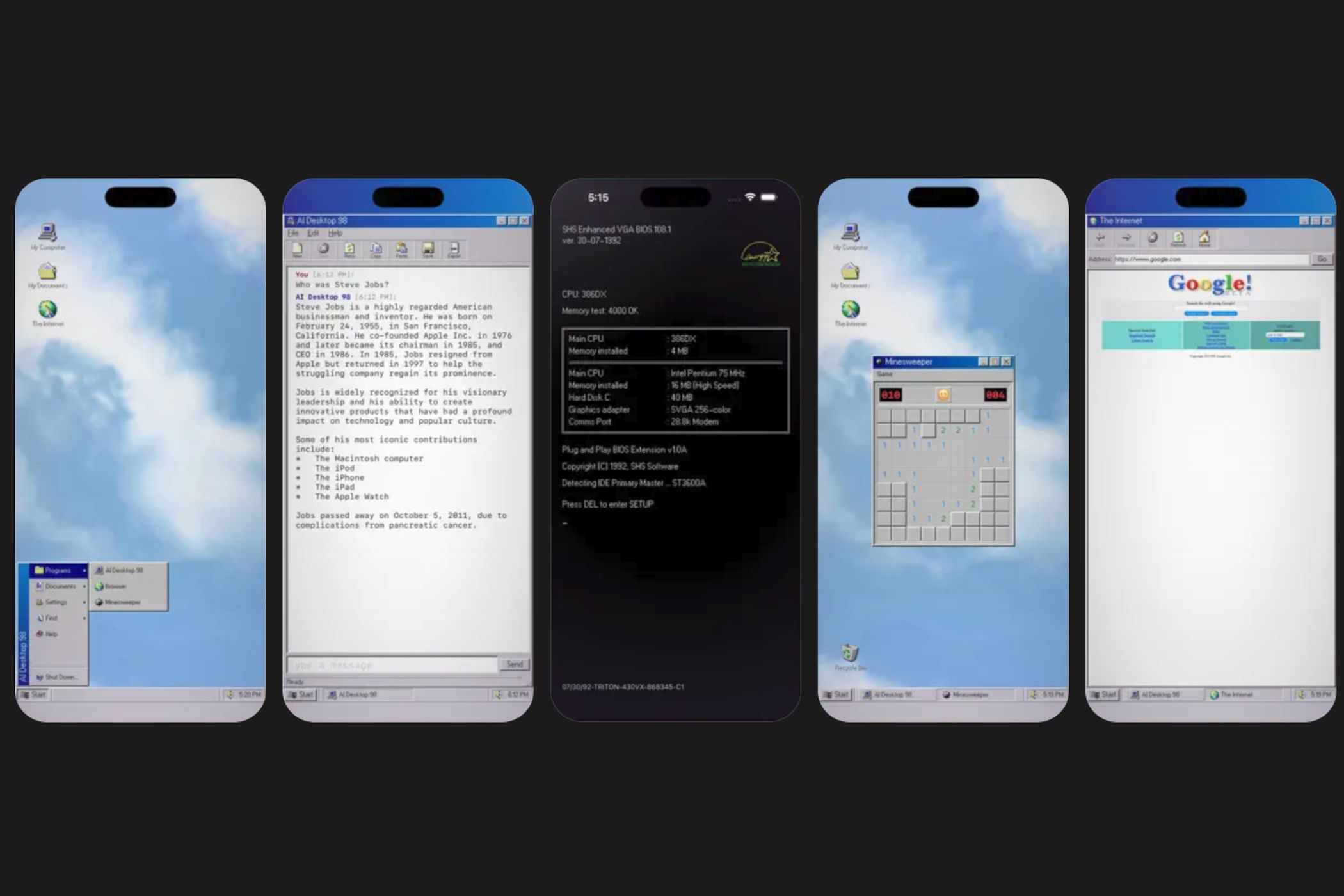

This is where the “what if” of the Y2K scenario becomes a current reality. Developers are now using AI to:

- Translate Legacy Logic: Converting COBOL into Java or Python while preserving the business logic.

- Synthetic Documentation: Using LLMs to analyze undocumented code and generate technical manuals.

- Regression Testing: Predicting how a change in a legacy system will affect modern APIs.

For the developer, this shifts the role from “digital archaeologist” to “systems architect.” Instead of spending weeks tracing a variable through a 40-year-old codebase, they can use AI to map the dependencies and focus on the high-level migration strategy.

The stakes here are high. A failure in legacy migration can lead to systemic outages, but the cost of doing nothing—maintaining “zombie” systems—is an unsustainable technical debt that slows down innovation across the entire financial and public sectors.

The New Risk: AI-Generated Technical Debt

While LLMs might have made Y2K far easier to fix, they introduce a new irony. We are currently producing code at a velocity that far exceeds our ability to audit it. When a developer uses an LLM to generate a complex module in seconds, they are creating a new form of legacy code. If that AI-generated code contains subtle hallucinated logic or security vulnerabilities, we are simply building the “Y2K bug” of 2040.

The analytical discipline required now isn’t just about writing code, but about verifying it. The “human in the loop” is no longer just a quality check; it is the only safeguard against a future where we have billions of lines of AI-written code that no human actually understands.

Quick Analysis: AI vs. Legacy Systems

Q: Can AI completely replace the need for legacy experts?

A: No. AI can identify patterns and suggest fixes, but it lacks the institutional context of why a specific (and seemingly odd) logic path was chosen in 1975. Human oversight remains critical for validation.

Q: What is the primary risk of using LLMs for code migration?

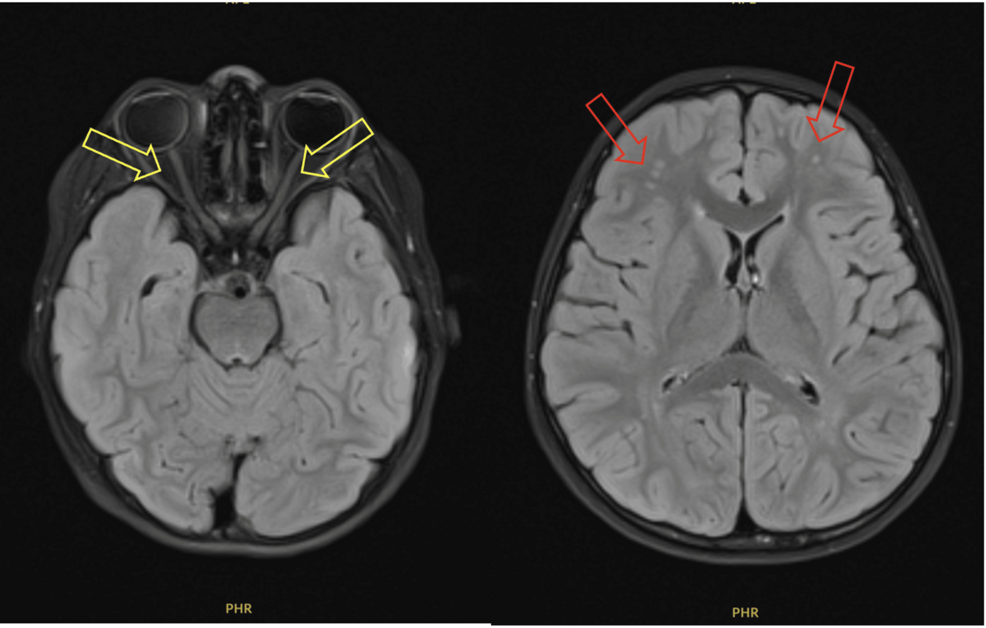

A: Hallucinations. An LLM might confidently suggest a refactored function that looks correct but fails in a specific edge case, which could be catastrophic in a banking or medical system.
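One way to catch this kind of edge-case failure is differential testing: run the legacy function and the refactored candidate on the same inputs and report every disagreement. The sketch below is illustrative only. Both functions are hypothetical, and the "candidate" is a deliberately flawed refactor written for this example, not the output of any actual model.

```python
from typing import Callable, Iterable


def legacy_is_leap(year: int) -> bool:
    """Reference behavior: the full Gregorian leap-year rule."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def candidate_is_leap(year: int) -> bool:
    """Hypothetical refactor that looks plausible but drops the 400-year rule."""
    return year % 4 == 0 and year % 100 != 0


def find_mismatches(
    reference: Callable[[int], bool],
    candidate: Callable[[int], bool],
    inputs: Iterable[int],
) -> list[int]:
    """Return every input on which the two implementations disagree."""
    return [x for x in inputs if reference(x) != candidate(x)]


mismatches = find_mismatches(legacy_is_leap, candidate_is_leap, range(1900, 2101))
print(mismatches)  # [2000]
```

The candidate agrees with the legacy function on 200 of the 201 years tested and is wrong only for 2000, exactly the sort of single-input failure that a casual review would miss. Differential testing assumes the legacy behavior is the behavior you want to keep; it cannot tell you whether the original logic was right.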

As we move further away from the analog era, we have to wonder: are we using AI to solve the mistakes of the past, or are we just accelerating the rate at which we make new ones?