The Era of the “Personal AI”: Why Small is the New Massive

For the past few years, the AI narrative has been dominated by “bigger is better.” We’ve seen a race toward trillion-parameter models that require warehouse-sized data centers and astronomical electricity bills to function. But a quiet shift is happening. We are entering the era of Small Language Models (SLMs) and the democratization of AI architecture.

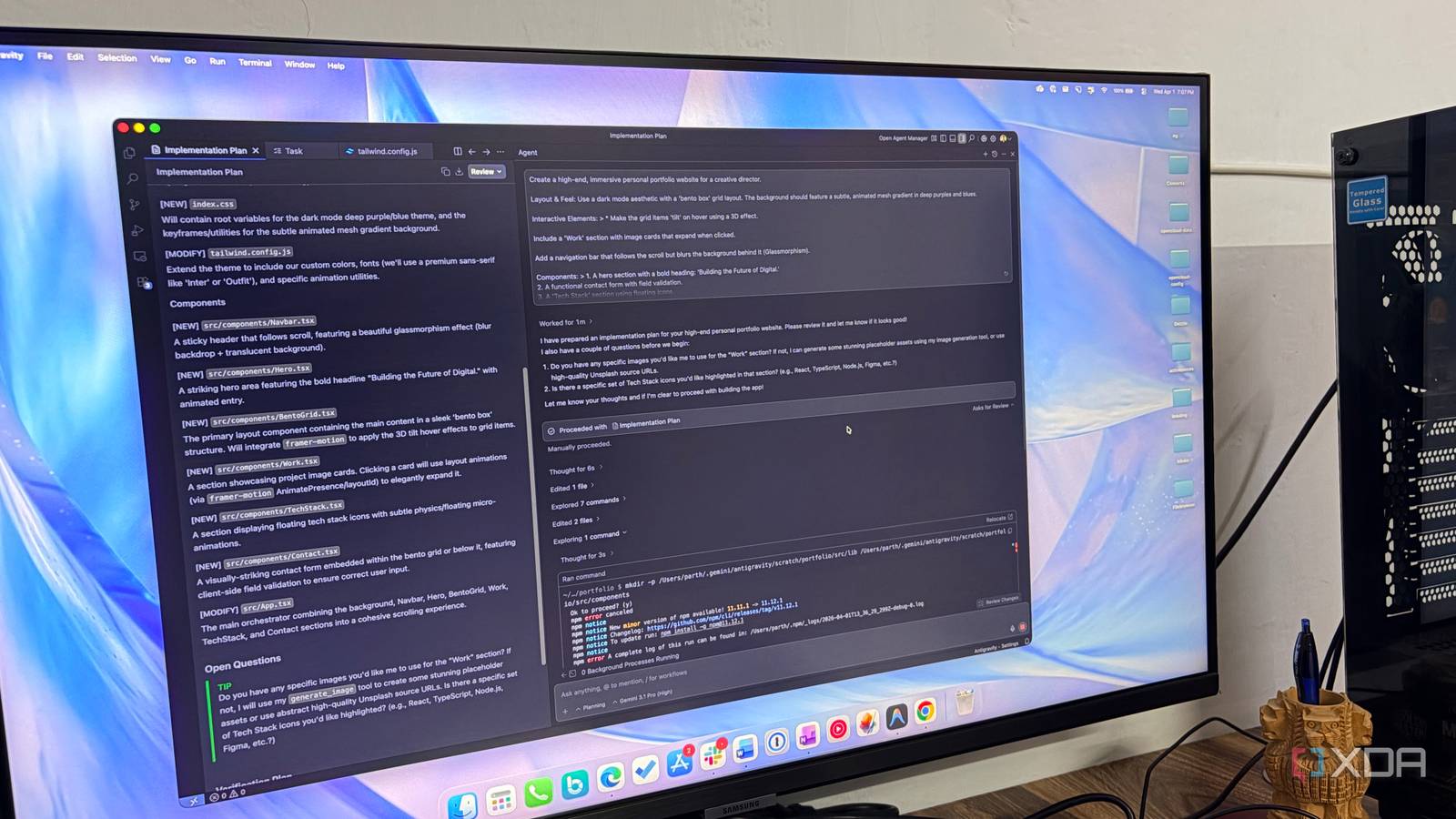

The emergence of DIY initiatives, such as the “LLM From Scratch” workshop, signals a move away from treating AI as a “black box” provided by a few tech giants. Instead, there is a growing movement toward local, transparent, and highly specialized models that can run on a standard consumer laptop.

This trend isn’t just about hobbyism; it’s about autonomy. When you can build and run a model locally, you avoid the “cloud tax”: the subscription fees and privacy risks that come with sending your most sensitive data to a remote server.

From Prompt Engineering to Model Architecture: The New Literacy

In the early days of the internet, “computer literacy” meant knowing how to use a word processor. In the 2010s, it shifted toward coding and data analysis. Today, we are seeing the birth of a new form of literacy: AI Architecture.

While “prompt engineering” (the art of talking to an AI) is useful, the real power lies in understanding the underlying machinery—tokenization, the Transformer architecture, and the training loop. When users move from being “consumers” to “builders,” the potential for innovation explodes.
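To make the first of those pieces concrete, here is a minimal sketch of tokenization in plain Python. It assumes the simplest possible scheme, one token per character; production models generally use subword tokenizers instead. The function names (`build_vocab`, `encode`, `decode`) are illustrative and not taken from any particular course or library.

```python
# Character-level tokenizer: an illustrative sketch, not a production scheme.

def build_vocab(text):
    """Assign an integer id to every distinct character (sorted for determinism)."""
    chars = sorted(set(text))
    stoi = {ch: i for i, ch in enumerate(chars)}   # string -> id
    itos = {i: ch for ch, i in stoi.items()}       # id -> string
    return stoi, itos

def encode(text, stoi):
    """Turn a string into a list of token ids."""
    return [stoi[ch] for ch in text]

def decode(ids, itos):
    """Turn a list of token ids back into a string."""
    return "".join(itos[i] for i in ids)

corpus = "hello world"
stoi, itos = build_vocab(corpus)
ids = encode("hello", stoi)
print(ids)                 # the numbers a model actually sees
print(decode(ids, itos))   # round-trips back to the original text
```

The model never sees letters, only these integer ids. Everything downstream (embeddings, attention, the training loop) operates on that sequence of numbers.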

We are likely to see a future where “AI Literacy” is a core requirement in education. Imagine a world where students don’t just use an AI to write an essay, but build a miniature, specialized model to analyze the themes of a specific novel or simulate a historical conversation.

Edge AI and the Death of Cloud Dependency

The most significant hardware trend is the move toward “Edge AI.” This is the practice of running AI models directly on the device (phone, laptop, or IoT device) rather than in the cloud. With NPUs (Neural Processing Units) now built into many modern processors, the hardware bottleneck is shrinking.

Real-world applications of this are already appearing. Local LLMs can now handle tasks such as real-time translation, personalized health monitoring, and secure document analysis without an internet connection. Running on the device also removes the network round trip, which reduces the “latency problem”: the annoying lag between sending a prompt and receiving an answer.

As we move forward, expect “Hybrid AI” ecosystems. Your device will use a tiny, fast local model for 90% of daily tasks and only “ping” a massive cloud model for complex, compute-heavy reasoning. This optimizes speed, cost, and battery life.
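The routing decision behind such a hybrid setup could be sketched roughly as follows. This is a toy heuristic under stated assumptions: the word-count threshold, the keyword list, and the names `Route` and `route_prompt` are all hypothetical, and a real system would likely use a learned classifier or the local model's own confidence instead.

```python
from dataclasses import dataclass

@dataclass
class Route:
    target: str   # "local" or "cloud"
    reason: str

# Hypothetical thresholds, chosen only for illustration.
MAX_LOCAL_WORDS = 200
COMPLEX_HINTS = ("prove", "multi-step", "analyze in depth")

def route_prompt(prompt: str, online: bool = True) -> Route:
    """Decide whether a prompt stays on the device or goes to a cloud model."""
    word_count = len(prompt.split())
    lowered = prompt.lower()
    looks_complex = word_count > MAX_LOCAL_WORDS or any(
        hint in lowered for hint in COMPLEX_HINTS
    )
    if not online:
        # Without a connection the local model is the only option.
        return Route("local", "no network available")
    if looks_complex:
        return Route("cloud", "prompt looks compute-heavy")
    return Route("local", "short, routine prompt")

print(route_prompt("Translate 'good morning' to French"))
print(route_prompt("Prove that this algorithm always terminates"))
```

The design point is the fallback order: the device answers by default, and the cloud is an escalation path rather than the starting point.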

Hyper-Specialization: The Rise of the “Niche” Model

The “General Purpose” AI is great for variety, but “Specialized” AI is better for precision. We are moving toward a future of hyper-specialization, where instead of one giant model that knows a little about everything, we have thousands of tiny models that each know one thing extremely well.

Consider the “AI Poet” concept from the “LLM From Scratch” course. While ChatGPT can write a poem, a model trained exclusively on the works of 19th-century Romantic poets will capture the nuance, meter, and vocabulary of that era with far greater authenticity.

This trend will extend to professional fields:

- Legal AI: Models trained only on case law for a specific jurisdiction.

- Medical AI: Models specialized in radiology reports to assist doctors.

- Coding AI: Local models trained on a company’s private codebase to provide hyper-accurate suggestions without leaking IP.

Frequently Asked Questions

Can I actually build an LLM on a normal laptop?

Yes, provided you are building a “tiny” model for educational purposes. While you can’t train a GPT-4 clone, you can certainly build a functional model that can generate text or poetry using a framework like PyTorch or TensorFlow.
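To show how small “tiny” can be, here is a sketch of a character-level bigram model written with only the Python standard library. Note the swap: this is a count-based model, not a neural network or a Transformer, and it needs neither PyTorch nor TensorFlow. The three-line corpus is a made-up placeholder, and the names `train_bigram` and `generate` are illustrative.

```python
import random
from collections import Counter, defaultdict

def train_bigram(text):
    """Count how often each character follows each other character."""
    counts = defaultdict(Counter)
    for current, following in zip(text, text[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, length, seed=0):
    """Sample text one character at a time, weighted by the observed counts."""
    rng = random.Random(seed)        # fixed seed so runs are repeatable
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:            # character never seen with a successor
            break
        chars, weights = zip(*followers.items())
        out.append(rng.choices(chars, weights=weights, k=1)[0])
    return "".join(out)

# Placeholder corpus; a real experiment would load a much larger text file.
corpus = "the rose is red, the violet is blue, the verse is new"
model = train_bigram(corpus)
sample = generate(model, "t", 40)
print(sample)
```

The output is mostly gibberish with a poetic accent, which is the point: “training” here means learning statistics from text, and “generation” means sampling from them. A Transformer replaces the count table with a learned network that looks at far more context than a single character.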

What is the difference between an LLM and an SLM?

An LLM (Large Language Model) typically has tens or hundreds of billions of parameters and requires massive compute. An SLM (Small Language Model) has far fewer parameters, often from a few million to a few billion, is frequently trained on more curated data, and can run on local hardware while remaining efficient for specific tasks. There is no strict cutoff between the two.
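A rough way to see why parameter count decides what runs locally is to estimate the memory needed just to hold the weights: parameters times bits per parameter, divided by eight to get bytes. The sketch below does that arithmetic. The model sizes are illustrative round numbers rather than specific products, and the estimate ignores activations, caches, and runtime overhead, so real usage will be higher.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

# Illustrative sizes (assumptions, not measurements of any real model).
examples = [
    ("125M-parameter model, 16-bit", 125e6, 16),
    ("7B-parameter model, 16-bit", 7e9, 16),
    ("7B-parameter model, 4-bit quantized", 7e9, 4),
]

for label, params, bits in examples:
    print(f"{label}: ~{weight_memory_gb(params, bits):.2f} GB")
```

By this estimate, a 7-billion-parameter model needs about 14 GB at 16-bit precision but about 3.5 GB when quantized to 4 bits, which is the difference between needing a workstation GPU and fitting in the memory of an ordinary laptop.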

Do I need to be a math genius to learn AI architecture?

Not anymore. While linear algebra and calculus are the foundations, many modern courses and repositories (like those on GitHub) provide the conceptual bridge, allowing you to learn by coding and experimenting rather than just studying theory.

Ready to stop prompting and start building?

The shift toward local, open-source AI is happening now. Whether you’re a hobbyist or a professional, the best way to predict the future of AI is to build it yourself.

Do you think local AI will eventually replace cloud-based giants? Let us know in the comments below or subscribe to our newsletter for more deep dives into the world of DIY tech!