The End of Bulky Gear: How Perception Chips are Redefining Wearable Tech

For years, the dream of “true” smart glasses—frames that look like ordinary eyewear but possess the intelligence of a supercomputer—has been stalled by a stubborn wall of physics. You either get sleek frames with limited power (like the Ray-Ban Meta) or immense power in a headset that feels like a brick on your face (like the Apple Vision Pro).

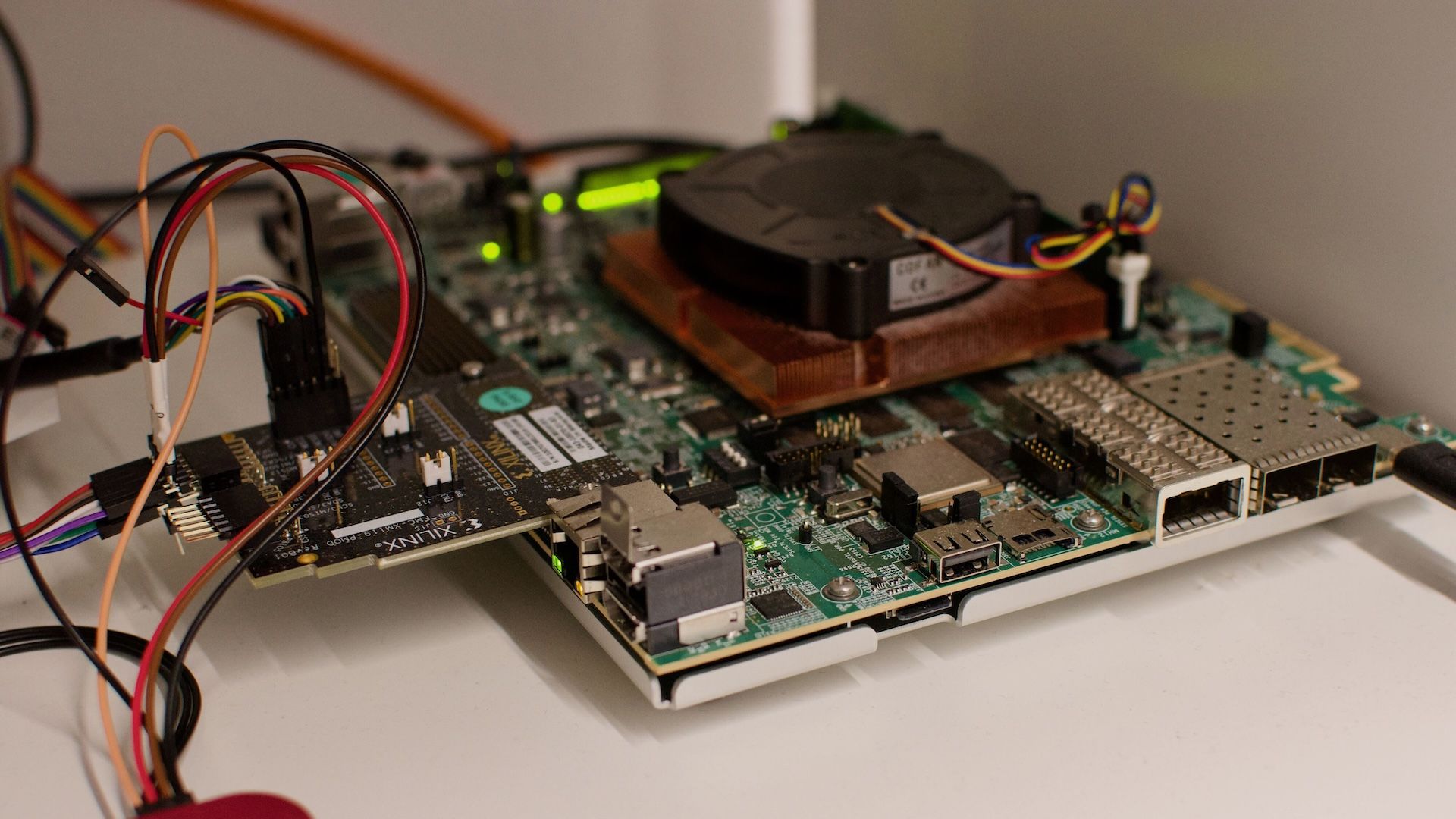

The bottleneck isn’t the software; it’s the silicon. General-purpose CPUs and GPUs draw more power and produce more heat than a slim frame can handle, so they demand large batteries and bulky cooling systems. Enter the era of Perception Chips.

What Exactly is a Perception SoC?

A System-on-Chip (SoC) dedicated to perception is essentially a specialized brain designed for one thing: understanding the environment in real time. While a general-purpose processor handles everything from emails to gaming, a perception chip focuses on spatial intelligence.

Swiss startup Mosaic is leading this charge with a multi-core architecture (eight or more ARM-based cores) that maximizes performance per watt. Instead of relying on a smartphone to do the heavy lifting over Bluetooth, which drains two batteries at once, these chips handle object recognition, position tracking, and scene understanding locally on the device.

The Three Pillars of Spatial Intelligence

- Real-Time Environmental Awareness: The ability to map a room and identify obstacles instantly.

- Object Recognition: Distinguishing between a coffee cup and a smartphone without perceptible lag.

- Scene Understanding: Contextualizing the world (e.g., knowing that a “door” is an exit and a “table” is a surface).
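
To make the third pillar concrete, here is a minimal Python sketch of how recognized labels might be turned into context. Everything in it is an assumption for illustration: the `AFFORDANCES` table, the `Detection` type, and the `contextualize` function are hypothetical names, not part of any vendor's API, and a real scene-understanding stack would be far richer than a lookup table.

```python
from dataclasses import dataclass

# Hypothetical label-to-role table (illustrative only).
# The two entries mirror the examples in the list above.
AFFORDANCES = {
    "door": "exit",
    "table": "surface",
}


@dataclass(frozen=True)
class Detection:
    """One recognized object: a label plus the recognizer's confidence."""
    label: str
    confidence: float


def contextualize(detections, min_confidence=0.5):
    """Keep confident detections and attach a role to each label.

    Labels missing from AFFORDANCES are reported as "unknown".
    """
    return [
        (d.label, AFFORDANCES.get(d.label, "unknown"))
        for d in detections
        if d.confidence >= min_confidence
    ]


scene = [
    Detection("door", 0.9),
    Detection("table", 0.3),  # dropped: below the confidence threshold
    Detection("cup", 0.8),    # kept, but has no known role
]
print(contextualize(scene))
```

The point of the sketch is the separation of concerns: recognition produces labels, and a second, cheaper step decides what those labels mean for the wearer.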

Future Trend: The Shift Toward “Invisible” Computing

The trajectory of wearable tech is moving away from “gadgets” and toward “ambient computing.” We are heading toward a future where the hardware disappears, leaving only the experience.

By reducing the thermal footprint and battery requirements, perception chips enable form-factor miniaturization. Imagine glasses that provide real-time translation or navigation overlays without needing a thick temple arm to house a massive battery. This is the “holy grail” of Augmented Reality (AR).

Beyond the Glasses: Smartphones and the IoT

While smart glasses are the headline, the impact of perception silicon extends to our pockets. Current smartphones often struggle with “always-on” camera features because running them continuously on the main processor drains the battery quickly.

Integrating a dedicated perception chip into a smartphone allows for continuous tracking and classification of the environment with minimal energy draw. This could lead to phones that “know” where they are and what they are looking at even when the main processor is asleep, enabling a new wave of context-aware applications.
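
A rough back-of-the-envelope sketch shows why this split matters. The Python below averages power draw when a small sensing block runs all the time and the main processor wakes only occasionally. All figures are made-up placeholders chosen for easy arithmetic, not measurements of any real phone or chip, and the function names are hypothetical.

```python
def average_power_mw(sensing_mw, main_active_mw, main_sleep_mw, wake_fraction):
    """Average draw in milliwatts.

    sensing_mw:     always-on perception block (assumed constant)
    main_active_mw: main processor while awake
    main_sleep_mw:  main processor while asleep
    wake_fraction:  share of time the main processor is awake, 0.0 to 1.0
    """
    if not 0.0 <= wake_fraction <= 1.0:
        raise ValueError("wake_fraction must be between 0 and 1")
    main = wake_fraction * main_active_mw + (1.0 - wake_fraction) * main_sleep_mw
    return sensing_mw + main


def battery_hours(capacity_mwh, avg_mw):
    """Hours of runtime for a given battery capacity and average draw."""
    return capacity_mwh / avg_mw


# Placeholder numbers, for illustration only.
always_on_main = average_power_mw(0.0, 1000.0, 5.0, 1.0)   # main CPU never sleeps
gated = average_power_mw(10.0, 1000.0, 5.0, 0.02)          # main CPU awake 2% of the time

print(f"main processor always on: {always_on_main:.1f} mW")
print(f"perception block + gating: {gated:.1f} mW")
```

With these placeholder inputs, the gated design averages a small fraction of the always-on draw. The real ratio depends entirely on the actual hardware and on how often the main processor has to wake up.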

From Hardware Supplier to Ecosystem Platform

The most significant trend is the transition from selling chips to building platforms. Companies like Mosaic aren’t just shipping silicon; they are providing the full application layer. This means developers can create apps specifically optimized for this hardware, creating a virtuous cycle of efficiency and utility.

Frequently Asked Questions

Q: How do perception chips differ from standard AI chips?

A: Standard AI chips are often designed for general-purpose AI workloads such as LLMs and image generation. Perception chips are optimized specifically for spatial data: they process visual and sensory input in real time with extreme energy efficiency.

Q: Will this make VR headsets obsolete?

A: Not necessarily. VR requires high-fidelity rendering, which is GPU-heavy. Perception chips primarily enhance AR (Augmented Reality) and smart wearables, where the goal is to blend digital info with the real world.

Q: Why is the “multi-core” approach better?

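A: By spreading the workload across many small, specialized cores rather than one giant core, each core can run at a lower clock speed and voltage. For workloads that split up well, the chip can deliver the same or higher throughput while using significantly less power, which is exactly what higher performance per watt means.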
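
For readers who want the arithmetic, here is a small Python sketch using the textbook approximation for CMOS dynamic power, P = C × V² × f. It rests on simplifying assumptions that are stated in the comments: the workload splits perfectly across cores, leakage power is ignored, and the lower clock allows the supply voltage to drop to 70% of the original. That 70% figure is an illustrative guess, not a number from Mosaic or any other vendor.

```python
def dynamic_power(capacitance, voltage, frequency):
    """Textbook CMOS dynamic power approximation: P = C * V^2 * f."""
    return capacitance * voltage ** 2 * frequency


def many_core_power(n_cores, voltage_scale):
    """Total dynamic power of n_cores in normalized units.

    Assumptions (illustrative only):
    - each core runs at 1/n_cores of the original clock, so total
      throughput matches the single fast core on a perfectly
      parallel workload
    - each core has the same switched capacitance as the big core
    - the slower clock lets voltage drop to voltage_scale
    - leakage and communication overhead are ignored
    """
    per_core = dynamic_power(1.0, voltage_scale, 1.0 / n_cores)
    return n_cores * per_core


one_big_core = dynamic_power(1.0, 1.0, 1.0)
four_small_cores = many_core_power(4, 0.7)

print(f"one fast core:   {one_big_core:.2f}")
print(f"four slow cores: {four_small_cores:.2f}")
```

Because voltage enters the formula squared, even a modest voltage reduction cuts power substantially. Under these idealized assumptions, four slower cores match the throughput of one fast core at roughly half the dynamic power. Real chips fall short of this ideal, since not every workload parallelizes cleanly.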

Join the Conversation

Do you think we’ll be wearing smart glasses instead of carrying smartphones by 2030? Or is the “bulk” of current headsets a dealbreaker for you?

Let us know in the comments below or subscribe to our newsletter for the latest in semiconductor breakthroughs!