Breaking the Chains of Proprietary AI

For years, the enterprise AI landscape has been dominated by a few giants. While tools like ChatGPT Enterprise and Microsoft Copilot offer immense power, they come with a significant trade-off: vendor lock-in and the constant risk of sensitive company data flowing through third-party systems.

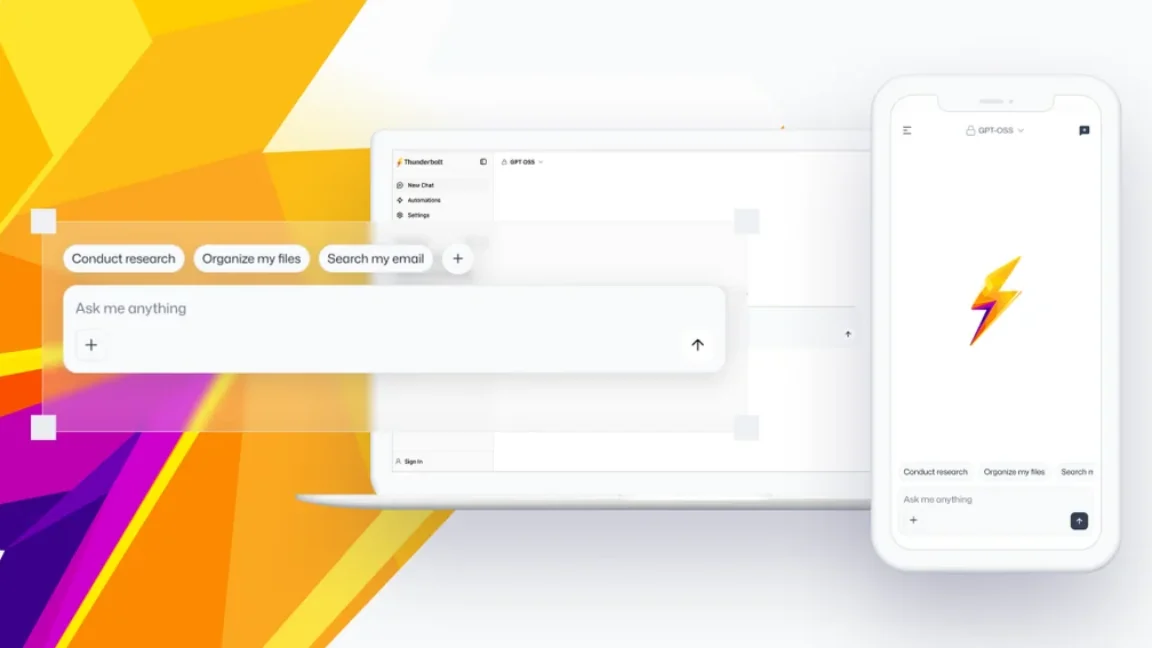

The emergence of “sovereign AI clients,” exemplified by Mozilla’s Thunderbolt, marks a pivotal shift. Instead of relying on a closed-loop cloud service, organizations are now moving toward self-hosted infrastructure. This approach allows businesses to maintain total control over their AI stack, ensuring that data privacy isn’t just a policy promise, but a technical reality.

By prioritizing sovereignty, the industry is moving toward a future where the AI interface is decoupled from the AI model. This means a company can change its underlying LLM without rebuilding its entire workflow or migrating its data to a new proprietary ecosystem.
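To make that decoupling concrete, here is a minimal Python sketch in which the interface layer depends only on a small backend contract. Every name in it (`ChatBackend`, `CannedBackend`, `Assistant`) is hypothetical and chosen for illustration; none of it is taken from Thunderbolt's code, and the canned backend stands in for a real model so the example runs offline.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatBackend(Protocol):
    """Contract the interface relies on; any model that satisfies it can be plugged in."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class CannedBackend:
    """Hypothetical stand-in for a real model so the example runs offline."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] reply to: {prompt}"


@dataclass
class Assistant:
    """The interface layer: it keeps the history and never names a specific model."""

    backend: ChatBackend
    history: list[str]

    def ask(self, prompt: str) -> str:
        answer = self.backend.complete(prompt)
        self.history.append(prompt)
        return answer


assistant = Assistant(backend=CannedBackend("hosted-model"), history=[])
first = assistant.ask("Summarize the Q3 report")

# Swap the model; the workflow and its stored history are untouched.
assistant.backend = CannedBackend("local-model")
second = assistant.ask("Now list the risks")
```

The point of the design is that `Assistant` never imports or names a vendor, so replacing the model is a change to one attribute rather than a migration.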

The Power of a Modular AI Stack

The next era of AI isn’t about finding one “perfect” model, but about building a modular pipeline. Thunderbolt leverages deepset’s Haystack framework to allow users to construct custom AI pipelines from components they choose themselves.

This modularity is supported by open protocols that are becoming the new standard for AI interoperability:

- Agent Client Protocol (ACP): Allows the client to plug into various compatible agents.

- Model Context Protocol (MCP): Enables seamless connection to MCP servers for better data integration.

- API Flexibility: Supports OpenAI-compatible APIs, including Claude, Codex, OpenClaw, DeepSeek, and OpenCode.

This flexibility ensures that enterprises can mix and match frontier models with local, open-source models depending on the sensitivity of the task at hand. For more on how these frameworks operate, check out our guide on modular AI frameworks.
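One way to picture this mix-and-match approach is a small router that picks an endpoint based on how sensitive a task is. The sketch below is an assumption-laden illustration, not Thunderbolt's implementation: the endpoint URLs, model names, and sensitivity labels are placeholders, and the request body follows the common OpenAI-style chat completions shape. It only builds the request, so nothing is sent over the network.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    base_url: str  # root of an OpenAI-compatible API
    model: str


# Hypothetical endpoints: replace with your own deployment details.
LOCAL = Endpoint("http://localhost:8000/v1", "local-open-model")
FRONTIER = Endpoint("https://api.example.com/v1", "frontier-model")

# Only tasks explicitly labeled as public may leave the self-hosted environment.
PUBLIC_LABELS = {"public"}


def pick_endpoint(sensitivity: str) -> Endpoint:
    """Send public work to the frontier model; everything else stays local."""
    return FRONTIER if sensitivity.lower() in PUBLIC_LABELS else LOCAL


def build_chat_request(prompt: str, sensitivity: str) -> tuple[str, dict]:
    """Return the URL and JSON body for a chat completions call (no network I/O)."""
    endpoint = pick_endpoint(sensitivity)
    url = f"{endpoint.base_url}/chat/completions"
    body = {
        "model": endpoint.model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return url, body


url, body = build_chat_request("Draft a reply to the audit findings", "confidential")
```

Unknown or missing labels fall back to the local endpoint, which is the safer default when the cost of a mistake is data leaving the organization.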

Data Sovereignty and the Local “Source of Truth”

One of the most significant trends in enterprise AI is the move toward localized data referencing. Rather than uploading entire corporate knowledge bases to a cloud provider, new tools are integrating with locally stored enterprise data through open protocols.

In Thunderbolt’s case, an offline SQLite database lets the AI reference critical information without that data ever leaving the organization’s controlled environment. When combined with optional end-to-end encryption and device-level access controls, the security profile changes from “trusting the provider” to “trusting the architecture.”
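The general pattern of local referencing can be sketched with Python's built-in `sqlite3` module. The table layout, sample rows, and the helper names `find_context` and `build_prompt` are hypothetical and exist only for illustration; the source does not describe Thunderbolt's actual schema or retrieval logic.

```python
import sqlite3

# ":memory:" keeps the example self-contained; a real deployment would
# point at a database file on local disk instead.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE documents (id INTEGER PRIMARY KEY, title TEXT, body TEXT)")
conn.executemany(
    "INSERT INTO documents (title, body) VALUES (?, ?)",
    [
        ("Travel policy", "Employees book travel through the internal portal."),
        ("Retention policy", "Customer records are retained for seven years."),
    ],
)
conn.commit()


def find_context(connection, keyword, limit=3):
    """Return (title, body) rows whose body mentions the keyword."""
    cursor = connection.execute(
        "SELECT title, body FROM documents WHERE body LIKE ? ORDER BY id LIMIT ?",
        (f"%{keyword}%", limit),
    )
    return cursor.fetchall()


def build_prompt(question, rows):
    """Prepend locally retrieved passages to the user's question."""
    context = "\n".join(f"- {title}: {body}" for title, body in rows)
    return f"Context:\n{context}\n\nQuestion: {question}"


rows = find_context(conn, "retained")
prompt = build_prompt("How long do we keep customer records?", rows)
```

Only the assembled prompt would ever be handed to a model, and if that model is also self-hosted, the knowledge base itself never crosses the organization's boundary.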

This shift is essential for industries with strict compliance requirements. When the entire stack—from the interface to the model and the database—is self-hosted, the “black box” of proprietary AI is replaced by a transparent, auditable system.

Building a Decentralized AI Ecosystem

Mozilla has expressed a vision to do for AI what it previously did for the web: create a decentralized, open-source ecosystem. This involves moving away from centralized “Big AI” and toward a diverse landscape of tools and agents.

The availability of native apps across Windows, macOS, Linux, iOS, and Android, along with the ability to build from React source code via GitHub, ensures that this ecosystem remains accessible and extensible.

As we move forward, we can expect to see more tools that support “cross-device workflows,” allowing users to move their AI-driven research, automation, and chat sessions seamlessly between mobile and desktop without being tethered to a single vendor’s cloud sync.

For those interested in the broader movement toward open tooling, the Mozilla.ai initiative continues to back the development of open-source models and agents that challenge the current monopoly of proprietary AI.

Frequently Asked Questions

What is a sovereign AI client?

A sovereign AI client is an open-source interface that allows users to run AI on their own self-hosted infrastructure, giving them full control over their data and the models they use.

Which models are compatible with Thunderbolt?

It supports OpenAI-compatible APIs, including Claude, Codex, OpenClaw, DeepSeek, and OpenCode, as well as local and open-source models.

How does Thunderbolt ensure data privacy?

It enables self-hosted deployment, utilizes local SQLite databases for a “source of truth,” and offers optional end-to-end encryption and device-level access controls.

Can Thunderbolt be used on mobile devices?

Yes, native applications are available for iOS and Android, in addition to Windows, macOS, Linux, and the web.

Join the Conversation

Do you believe self-hosted AI is the future for enterprises, or will the convenience of cloud-based “Big AI” always win? Let us know your thoughts in the comments below or subscribe to our newsletter for the latest in open-source tech!