The Unconscious Mind: More Active Than We Thought

For decades, general anesthesia was viewed as a “light switch”: a state in which the brain effectively goes offline, pausing the complex machinery of thought, language, and perception. However, research on the human hippocampus is turning this narrative on its head.

Recent data suggest that even when a patient is under total intravenous anesthesia (using agents such as propofol), the brain doesn’t stop processing. In fact, it continues to perform “oddball discrimination,” detecting a rare stimulus within a stream of repetitive ones. This suggests that the brain’s capacity for pattern recognition remains at least partly intact, even when conscious awareness is absent.
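
To make the oddball idea concrete, here is a minimal sketch in Python. It builds a stimulus sequence in which oddballs are rare, then compares simulated responses to the two stimulus types. Everything in it is an assumption made for illustration: the function names, the 10% oddball probability, and the made-up "firing rates" are not taken from the research described here.

```python
import random

def make_oddball_sequence(n_trials, p_oddball=0.1, seed=0):
    """Return a list of labels in which 'oddball' is rare and 'standard' is common."""
    rng = random.Random(seed)
    return ["oddball" if rng.random() < p_oddball else "standard"
            for _ in range(n_trials)]

def simulated_rate(label, rng, baseline=5.0, oddball_boost=2.0):
    """Hypothetical firing rate: noisy baseline, plus a boost for oddballs."""
    rate = rng.gauss(baseline, 1.0)
    if label == "oddball":
        rate += oddball_boost
    return rate

def oddball_effect(sequence, seed=1):
    """Mean simulated response to oddballs minus mean response to standards."""
    rng = random.Random(seed)
    rates = {"oddball": [], "standard": []}
    for label in sequence:
        rates[label].append(simulated_rate(label, rng))
    mean = lambda xs: sum(xs) / len(xs)
    return mean(rates["oddball"]) - mean(rates["standard"])

sequence = make_oddball_sequence(2000)
print(sequence.count("oddball"), "oddballs out of", len(sequence))
print("mean rate difference:", round(oddball_effect(sequence), 2))
```

A response difference that is reliably above zero is the kind of signal "oddball discrimination" refers to. Real recordings are far noisier and need proper statistics.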

This discovery opens a door for the future of neuroscience. If the brain can learn and adapt without consciousness, we may need to redefine what “awareness” actually is. Are we merely the observers of a process that happens automatically in the background?

Decoding the Silent Language of the Brain

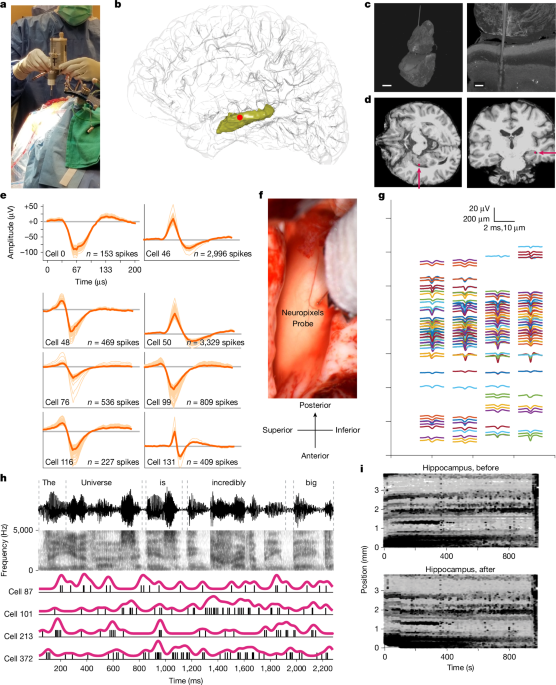

Perhaps the most startling revelation is the brain’s relationship with language during anesthesia. By playing podcasts to patients and recording neural activity via high-density Neuropixels probes, researchers found that the hippocampus still tracks semantic and grammatical features of speech.

The brain wasn’t just “hearing” noise; it was processing meaning. More strikingly, the recorded neural activity could be used to predict upcoming words in a sentence. This “online prediction” is a hallmark of high-level cognition, yet it persisted in a state of induced unconsciousness.
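
What does “predicting the next word from context” look like in its simplest form? The toy sketch below counts which word tends to follow which in a short text and uses those counts to guess the next word. It is only an analogy for context-based prediction, not the decoding method used on neural recordings; the sample sentence and function names are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it in the text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def predict_next(counts, prev_word):
    """Return the most frequent follower of prev_word, or None if unseen."""
    followers = counts.get(prev_word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical sample text standing in for a podcast transcript.
corpus = "the patient heard the podcast and the patient slept"
counts = train_bigrams(corpus)
print(predict_next(counts, "the"))
```

Modern language models use much longer context than a single preceding word, but the principle is the same: past input constrains what is likely to come next.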

The Rise of Real-Time Neural Decoding

This capability paves the way for a future where we can decode thoughts and language directly from the brain without the need for verbal output. Imagine a world where patients in comas or those with locked-in syndrome can communicate their needs because we can “read” the semantic processing happening in their hippocampus.

By using tools such as Support Vector Machines (SVMs) and Word2Vec embeddings, scientists are already mapping how specific semantic categories, such as “emotional words” or “social words,” trigger distinct neural firing patterns. The transition from laboratory research to clinical application may be closer than we think.
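
For readers curious about how an SVM can separate two word categories from firing patterns, here is a minimal sketch. It trains a linear SVM (hinge loss with L2 regularization, optimized by plain stochastic subgradient descent) on synthetic data. The assumptions are loud ones: the “firing rates” are random numbers, the two classes merely stand in for categories like emotional and social words, Word2Vec is not used at all, and this is not the researchers’ actual pipeline.

```python
import random

def make_trials(n_per_class, n_neurons=8, seed=0):
    """Synthetic trials: half the neurons fire more for class +1, half for class -1."""
    rng = random.Random(seed)
    trials = []
    for label in (+1, -1):
        for _ in range(n_per_class):
            rates = []
            for i in range(n_neurons):
                shift = 1.5 * label if i < n_neurons // 2 else -1.5 * label
                rates.append(rng.gauss(5.0 + shift, 1.0))
            trials.append((rates, label))
    rng.shuffle(trials)
    return trials

def dot(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))

def train_linear_svm(trials, lam=0.01, eta=0.001, epochs=20, seed=1):
    """Linear SVM trained with stochastic subgradient descent on the hinge loss."""
    rng = random.Random(seed)
    w = [0.0] * len(trials[0][0])
    b = 0.0
    for _ in range(epochs):
        order = trials[:]
        rng.shuffle(order)
        for x, y in order:
            violated = y * (dot(w, x) + b) < 1   # inside the margin or misclassified
            w = [wi - eta * lam * wi for wi in w]  # L2 shrinkage on every step
            if violated:
                w = [wi + eta * y * xi for wi, xi in zip(w, x)]
                b += eta * y
    return w, b

def accuracy(w, b, trials):
    """Fraction of trials whose predicted sign matches the label."""
    correct = sum(1 for x, y in trials
                  if (1 if dot(w, x) + b >= 0 else -1) == y)
    return correct / len(trials)

trials = make_trials(100)              # 200 trials in total
train, test = trials[:160], trials[160:]
w, b = train_linear_svm(train)
print("held-out accuracy:", accuracy(w, b, test))
```

The key design choice is evaluating on held-out trials: a decoder only shows that a category is represented in the activity if it generalizes to data it was not trained on.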

From Lab to Life: The Future of Precision Medicine

The implications of this research extend far beyond theoretical curiosity. We are looking at a paradigm shift in how we handle surgery and anesthesia. Currently, anesthesiologists use BIS (Bispectral Index) monitors to estimate the depth of unconsciousness. However, BIS values are computed from scalp EEG, so they are indirect proxies rather than direct readouts of a patient’s state of consciousness.

Future trends suggest a move toward Neural Signature Monitoring. By monitoring the hippocampus’s response to specific stimuli, doctors could estimate a patient’s level of consciousness more directly, reducing the risk of “intraoperative awareness,” the nightmare scenario in which a patient becomes conscious during surgery but cannot move.
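
One simple way to put a number on “the response to specific stimuli” is a discriminability index such as d′ (d-prime), which measures how far apart two sets of responses are relative to their spread. The sketch below is purely illustrative: using d′ for anesthesia monitoring is our assumption, not an established clinical method, and the response values are invented.

```python
import math
import statistics

def d_prime(oddball_responses, standard_responses):
    """Difference in mean response divided by the pooled standard deviation."""
    mu_o = statistics.mean(oddball_responses)
    mu_s = statistics.mean(standard_responses)
    var_o = statistics.variance(oddball_responses)   # sample variance
    var_s = statistics.variance(standard_responses)
    pooled_sd = math.sqrt((var_o + var_s) / 2)
    if pooled_sd == 0:
        raise ValueError("responses have no variability; d' is undefined")
    return (mu_o - mu_s) / pooled_sd

# Invented response values (for example, spikes per second) for illustration.
oddball = [7.0, 8.0, 9.0]
standard = [4.0, 5.0, 6.0]
print("d' =", d_prime(oddball, standard))
```

A d′ near zero would mean the recorded activity does not distinguish rare from common stimuli, while larger values mean clearer discrimination.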

The Synergy of AI and Biology

The use of Recurrent Neural Networks (RNNs) to mirror human hippocampal activity is another frontier. By training AI to perform the same “oddball detection” tasks as the human brain, we are building computational models whose behavior can be compared directly with human cognition. This could lead to:

- Advanced Neuro-prosthetics: Devices that don’t just move a limb but “understand” the intent and context of the movement.

- Cognitive Restoration: Using AI-driven stimulation to “re-teach” the hippocampus how to process language after a stroke or traumatic brain injury.

- Enhanced Learning: Understanding the mechanics of representational plasticity to develop new ways of accelerating human learning.
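
As a rough illustration of the oddball detection task mentioned in this section, the sketch below uses a single recurrent unit whose state is a running expectation of the input. A stimulus that deviates strongly from that expectation gets flagged. Note the assumptions: this is a hand-wired unit with fixed weights, not a trained RNN, and the parameter values `alpha` and `threshold` are arbitrary choices for the example.

```python
def run_recurrent_detector(stimuli, alpha=0.2, threshold=0.5):
    """Flag stimuli that differ strongly from a recurrently updated expectation.

    stimuli: list of floats, e.g. 0.0 for a standard tone and 1.0 for an oddball.
    """
    h = 0.0                      # hidden state: running expectation of the input
    flags = []
    for x in stimuli:
        surprise = abs(x - h)    # prediction error against the current state
        flags.append(surprise > threshold)
        h = (1 - alpha) * h + alpha * x   # recurrent update of the state
    return flags

stimuli = [0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 1.0, 0.0]
flags = run_recurrent_detector(stimuli)
print([i for i, flagged in enumerate(flags) if flagged])  # prints [4, 8]
```

A trained RNN learns its own weights from data instead of having them set by hand, but the core ingredient is the same: a state that carries information about past inputs forward in time.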

For more on how technology is merging with biology, check out our deep dive into the evolution of Brain-Computer Interfaces.

Frequently Asked Questions

Can we actually “think” while under anesthesia?

While you aren’t “aware” in the traditional sense, your brain continues to process complex information, recognize patterns, and even predict language. It is a form of subconscious processing that operates independently of conscious experience.

What are Neuropixels probes?

Neuropixels are high-density silicon probes with hundreds of closely spaced recording sites. They allow scientists to record the activity of hundreds of individual neurons simultaneously, providing a high-resolution map of brain activity.

How does the brain predict words while unconscious?

The hippocampus appears to use previous context to anticipate what comes next. This seems to be a fundamental property of the brain’s architecture, one that remains active even when the cortical activity associated with conscious experience is suppressed by anesthesia.

Join the Conversation

Does the idea of your brain “thinking” while you’re asleep or under anesthesia fascinate you—or creep you out? We want to hear your thoughts on the future of neural decoding!
