The Limits of AI Insight: Why Powerful Tools Still Need Human Understanding

Artificial intelligence is rapidly changing the landscape of problem-solving, achieving feats once considered science fiction. Recent advancements demonstrate AI’s ability to tackle complex challenges, like redesigning proteins. However, a critical question remains: can AI not only do, but also explain? Emerging research suggests that while AI can generate impressive results, understanding the ‘why’ behind its decisions is proving remarkably difficult.

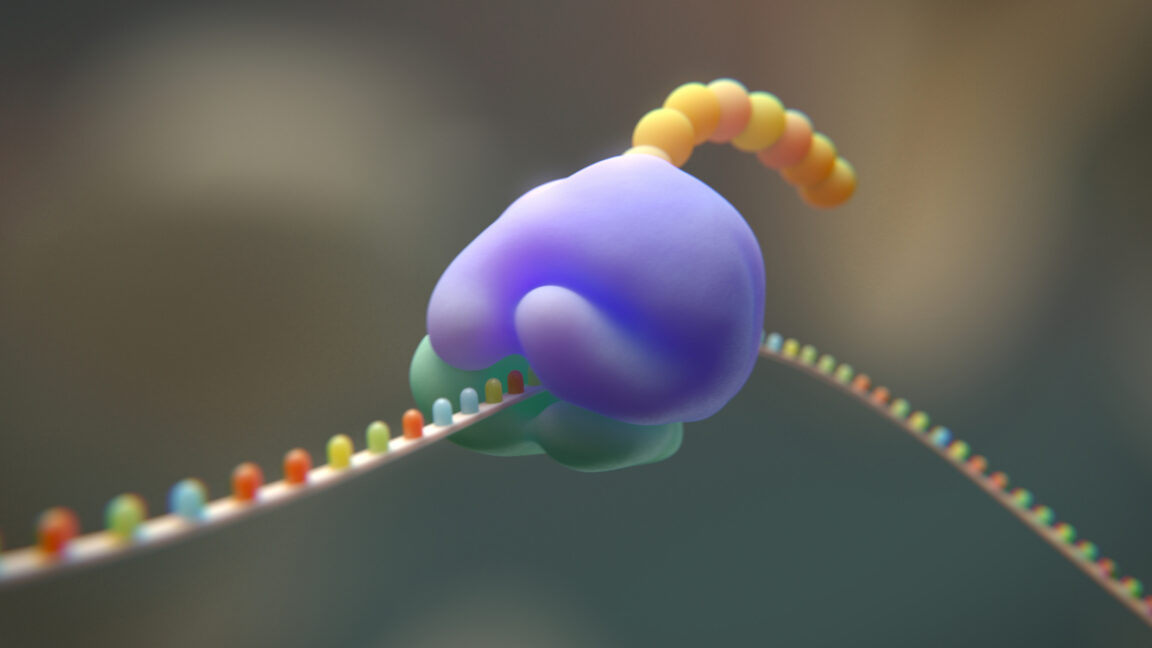

AI’s Astonishing Protein Redesign Capabilities

A recent study highlighted the potential of AI in protein engineering. Researchers successfully used AI to craft “radical changes” to protein structures, a process that would typically take billions of years through natural evolution. This achievement is particularly noteworthy considering the intricate interactions proteins have with other cellular components – ribosomal RNAs, transfer RNAs, messenger RNAs, and other proteins. The speed at which AI accomplished this feat is, as researchers noted, “mind-blowing.”

The “Black Box” Problem and the Need for Transparency

Despite these successes, a significant hurdle remains: the lack of transparency in AI’s decision-making process. Current AI models often function as “black boxes,” delivering solutions without revealing the reasoning behind them. This opacity presents challenges. For instance, when presented with a problem, different AI models may arrive at different solutions, and researchers struggle to determine why these variations occur. Are the models exploring genuinely different approaches, or are their discrepancies rooted in mathematical quirks?

This isn’t merely an academic concern. In fields like healthcare and finance, understanding the rationale behind an AI’s decision is crucial for building trust and ensuring accountability. Imagine an AI-powered diagnostic tool recommending a specific treatment. Without knowing why it made that recommendation, doctors may be hesitant to rely on its judgment.

Reasoning Backwards: A Difficult Task

Researchers are attempting to decipher AI’s internal logic by “reasoning backward” from its outputs. However, this process is often frustratingly opaque. In one instance, an AI redesigned an entire structural element of a protein – an alpha helix – for reasons researchers couldn’t even begin to guess. This highlights the limitations of inferring intent from AI’s actions.

The Future of AI: Prioritizing Explainability

The current emphasis in AI development has understandably been on achieving functionality – creating systems that simply *work*. However, the growing recognition of the “black box” problem suggests a shift is needed. Future research should prioritize developing AI models that are more transparent and explainable. This could involve designing algorithms that provide insights into their decision-making processes or developing tools that allow users to interrogate AI’s reasoning.
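To make the idea of “interrogating” a model a little more concrete, here is a minimal Python sketch of one simple, model-agnostic probe: shuffle one input at a time and measure how much the output moves. It is a permutation-style sensitivity check, a simplified relative of permutation feature importance, and not the method used in the protein study. The `black_box` function, its three inputs, and the `permutation_sensitivity` helper are all hypothetical stand-ins invented for illustration.

```python
import random


def black_box(features):
    """Hypothetical opaque model: callers see only inputs and outputs."""
    a, b, c = features
    # Assumption for this sketch: the third input is silently ignored.
    return 3.0 * a + 0.5 * b


def permutation_sensitivity(model, rows, n_repeats=20, seed=0):
    """Mean absolute change in output when one input column is shuffled."""
    rng = random.Random(seed)
    baseline = [model(row) for row in rows]
    scores = []
    for j in range(len(rows[0])):
        total = 0.0
        for _ in range(n_repeats):
            # Shuffle column j across rows, leaving other columns intact.
            column = [row[j] for row in rows]
            rng.shuffle(column)
            for row, value, base in zip(rows, column, baseline):
                perturbed = list(row)
                perturbed[j] = value
                total += abs(model(perturbed) - base)
        scores.append(total / (n_repeats * len(rows)))
    return scores


# Synthetic inputs, generated only to exercise the probe.
data_rng = random.Random(42)
rows = [[data_rng.uniform(-1, 1) for _ in range(3)] for _ in range(200)]

scores = permutation_sensitivity(black_box, rows)
for name, score in zip(["a", "b", "c"], scores):
    print(f"input {name}: sensitivity {score:.3f}")
```

In this toy setup the probe ranks the first input as most influential and reports zero sensitivity for the third, which the stand-in model never uses. A probe like this shows which inputs matter, not why the model combines them as it does, so it narrows the black box rather than opening it.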

For now, AI should be viewed as a powerful tool, capable of augmenting human capabilities but not replacing human understanding. We are still reliant on our own neural networks to interpret phenomena and make informed judgments.

Pro Tip

When evaluating AI-driven solutions, always ask: “What is the reasoning behind this recommendation?” Don’t accept answers like “the AI said so.” Demand transparency and a clear explanation of the factors influencing the decision.

FAQ: AI Decision-Making

Q: What is the “black box” problem in AI?

A: It refers to the difficulty in understanding how AI models arrive at their decisions, as their internal processes are often opaque.

Q: Why is explainability important in AI?

A: Explainability builds trust, ensures accountability, and allows humans to validate and improve AI’s performance.

Q: Can AI truly “think” like a human?

A: Current AI models excel at pattern recognition and data analysis, but they lack the nuanced reasoning, common sense, and contextual understanding of human thought.

Q: What are the potential applications of AI in protein engineering?

A: AI can accelerate the design of new proteins with specific functions, leading to advancements in medicine, materials science, and biotechnology.

Want to learn more about the latest advancements in artificial intelligence? Explore IBM’s insights on AI decision-making and stay ahead of the curve.
