Cracking the Protein Code: The Shift Toward Explainable AI in Bio-Engineering

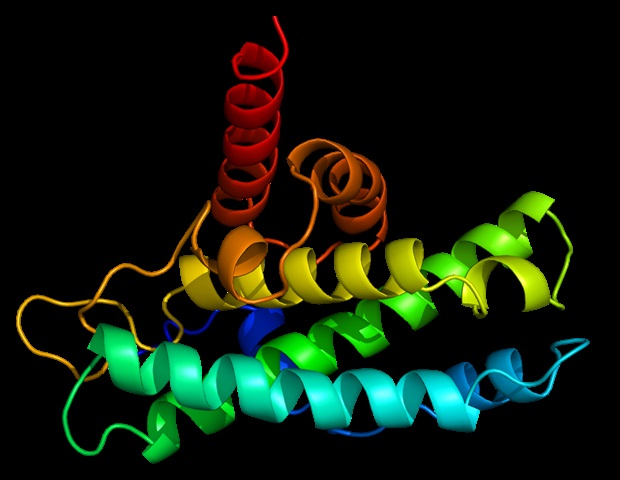

Protein language models (pLMs) are fundamentally changing how we approach biotechnology. These AI tools allow scientists to engineer proteins with useful properties, creating entirely new structures that have never existed in nature. From synthesizing enzymes that can scrub carbon dioxide from the atmosphere to developing industrial catalysts that slash energy consumption and toxic waste, the potential is staggering.

However, a critical hurdle remains: the “black box” problem. While these models can predict a protein’s structure or function with uncanny accuracy, they rarely explain why they reached that conclusion. As pLMs begin to drive real-world biotech decisions, the need for “explainable AI” (XAI) has moved from a luxury to a necessity.

Decoding the Decision: Where Does the Explanation Live?

To move beyond the black box, researchers at the Centre for Genomic Regulation (CRG) suggest that we must identify exactly where a model’s predictive decision originates. According to a perspective paper published in Nature Machine Intelligence, there are four critical areas to investigate:

- Training Data: Analyzing the data the model learned from can reveal biases, such as a lack of human genetic diversity or insufficient data on specific human proteins.

- Protein Sequences: Much like asking whether square footage or location drove a real estate model’s price estimate, researchers can ask which specific amino acids or regions of a protein influenced a pLM’s prediction most.

- Model Architecture: This is the equivalent of “opening the hood” of a car to check the engine, ensuring the artificial neurons are processing information correctly.

- Input-Output Behavior: By “nudging” the model—slightly altering a protein sequence or the question asked—researchers can observe how the answer changes to understand the model’s logic.
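To make the “nudging” idea concrete, here is a minimal Python sketch of a single-substitution scan: every position in a sequence is mutated to each other amino acid, and the average change in the model’s score is recorded as that position’s sensitivity. This is an illustration only, not the method from the CRG paper. The names `toy_score`, `mutation_scan`, and `top_positions` are hypothetical, and `toy_score` is a hand-written stand-in for a real pLM output that simply rewards an invented “HEH” motif.

```python
from typing import Callable, List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def toy_score(sequence: str) -> float:
    """Hypothetical stand-in for a pLM prediction (NOT a real model).

    Rewards the presence of an invented 'HEH' motif and, weakly,
    the number of hydrophobic residues.
    """
    score = 5.0 if "HEH" in sequence else 0.0
    score += 0.1 * sum(sequence.count(aa) for aa in "AILMFVW")
    return score


def mutation_scan(sequence: str, score_fn: Callable[[str], float]) -> List[float]:
    """Return one sensitivity value per position.

    Sensitivity is the mean absolute change in score over all 19
    possible single substitutions at that position.
    """
    baseline = score_fn(sequence)
    sensitivities = []
    for i, original in enumerate(sequence):
        deltas = []
        for aa in AMINO_ACIDS:
            if aa == original:
                continue
            mutant = sequence[:i] + aa + sequence[i + 1:]
            deltas.append(abs(score_fn(mutant) - baseline))
        sensitivities.append(sum(deltas) / len(deltas))
    return sensitivities


def top_positions(sensitivities: List[float], k: int) -> List[int]:
    """Indices (0-based) of the k most sensitive positions, in ascending order."""
    ranked = sorted(range(len(sensitivities)), key=lambda i: sensitivities[i], reverse=True)
    return sorted(ranked[:k])


if __name__ == "__main__":
    seq = "GSHEHGSA"
    scan = mutation_scan(seq, toy_score)
    for pos in top_positions(scan, 3):
        print(pos, seq[pos], round(scan[pos], 3))
```

With a real model, `score_fn` would wrap whatever quantity is being explained (for example, a predicted property or a sequence likelihood). The scan itself stays the same, although its cost grows with sequence length because each position requires 19 extra model calls.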

The Evolution of AI Roles: From Evaluator to Teacher

Currently, explainability in protein research is largely used for verification rather than discovery. The researchers have categorized the roles of XAI into a hierarchy of sophistication:

The Current Standard: Evaluators and Multitaskers

Most current studies use XAI as an Evaluator, checking if the AI recognizes patterns biologists already know, such as structural motifs or binding sites. A smaller group uses XAI as a Multitasker, reapplying those signals to annotate new proteins or predict additional properties.

The Emerging Frontier: Engineers and Coaches

A limited number of studies are pushing further, using XAI as an Engineer or Coach. In these roles, insights are used to trim unnecessary model components or redesign architectures to steer the AI toward generating sequences with specific, desired traits.

The Holy Grail: The “Teacher” Model

The most ambitious goal is the Teacher model. This would be an AI capable of revealing entirely new biological rules regarding molecular interaction and protein folding. As Dr. Noelia Ferruz, Group Leader at the CRG, explains, the ultimate goal is controllable protein design.

“Imagine being able to tell a model: ‘Design a protein with this shape, active at this pH,’ and not only receive a candidate sequence, but also a clear explanation of why that design should work, and importantly, why alternatives would fail,” says Dr. Ferruz.

The Road to Trustworthy Bio-Design

Moving toward a “Teacher” status won’t happen by accident. Today’s models are powerful pattern recognizers, but they often rely on statistical correlations rather than a true understanding of biology. To bridge this gap, the research community is calling for three major shifts:

- Robust Benchmarks: Creating frameworks to test whether an AI’s explanation actually reflects its internal reasoning.

- Open-Source Tooling: Making explainability tools accessible across different labs to ensure results are comparable.

- Laboratory Validation: Ensuring that every “insight” provided by the AI is tested in a real-world biological environment.

Without these safeguards, we risk building powerful tools that we cannot fully trust. As Andrea Hunklinger, first author of the CRG paper, notes, “If we want protein language models to become a reliable partner in discovery and design, explainability must not be an afterthought.”

Frequently Asked Questions

What is a Protein Language Model (pLM)?

It is an AI tool that treats protein sequences like a language, allowing researchers to engineer proteins with specific properties or create entirely new structures.

Why is “explainability” important in biotechnology?

Because many AI models act as “black boxes,” it is difficult to know whether a prediction is biased, unreliable, or unsafe. Explainable AI (XAI) allows humans to understand and trust the decision-making process.

What would a “Teacher” AI model be able to do?

A Teacher model would go beyond pattern recognition to reveal new biological principles, such as new rules for protein folding or catalysis, effectively teaching scientists something they didn’t previously know.
