The Rise of the AI Orchestra: Why NVIDIA’s Huang Says Open and Proprietary AI Must Coexist

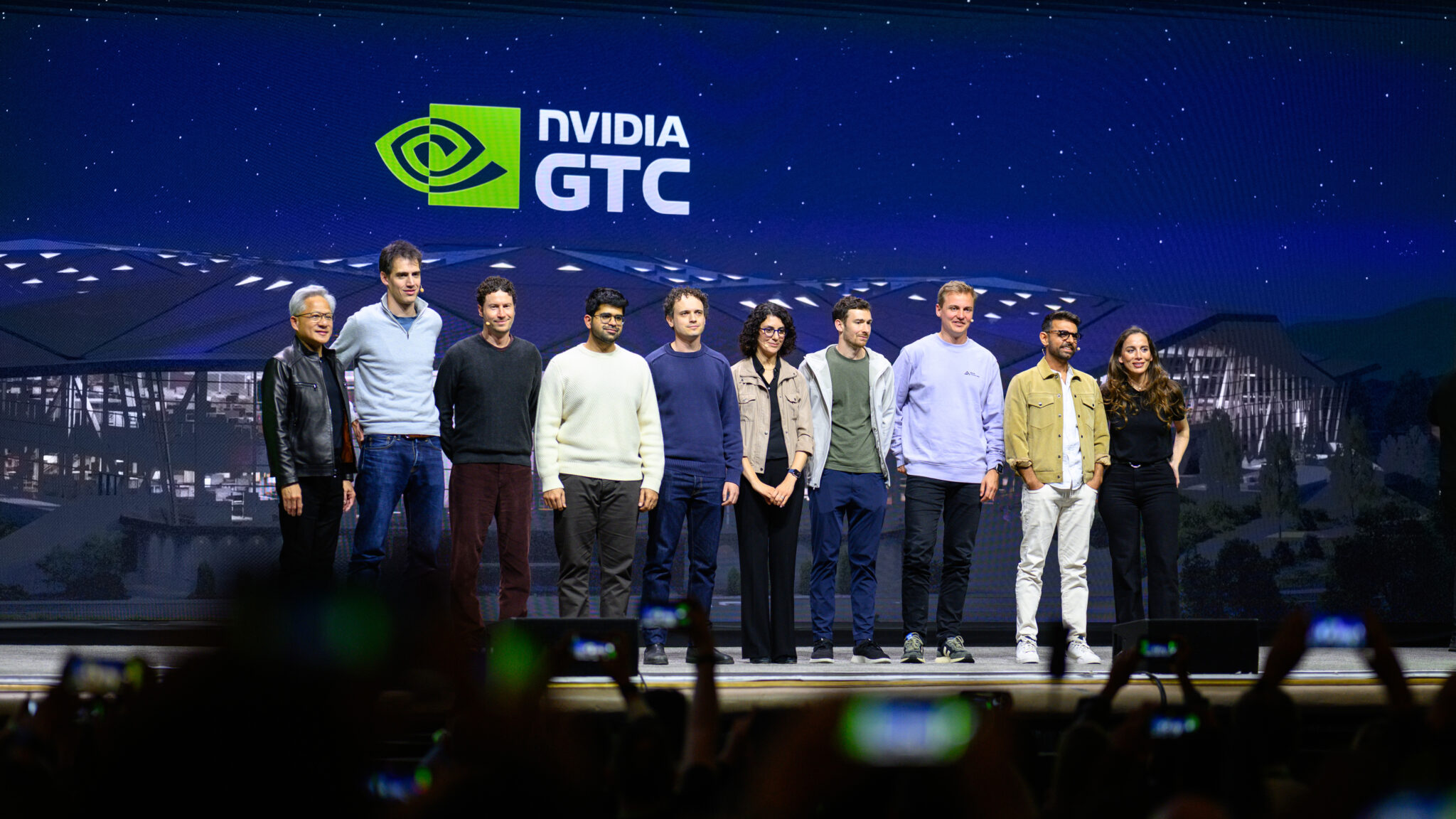

Artificial intelligence is rapidly evolving from a promising technology to the core infrastructure of businesses worldwide. But the future isn’t about a single, monolithic AI – it’s about a diverse ecosystem of models, both large and small, open and closed, generalist and specialist. This was the central message from NVIDIA founder and CEO Jensen Huang at a recent session on open frontier models at NVIDIA GTC.

Beyond Open vs. Closed: A Hybrid Approach

Huang emphatically stated that the debate isn’t about choosing between open and closed innovation. Instead, it’s about recognizing that both approaches are essential. “Proprietary versus open is not a thing. It’s proprietary and open,” he explained. This signals a shift in thinking, acknowledging the strengths of both approaches and the need for collaboration.

The Need for Specialized AI Systems

Every industry faces unique challenges. Healthcare, finance, and manufacturing all require AI tailored to their specific data and workflows. A one-size-fits-all approach simply won’t work. The solution? Systems of models, finely tuned and specialized for different tasks, working together to solve complex business problems.

NVIDIA is actively contributing to the open-source AI movement and is now the largest organization on Hugging Face, with nearly 4,000 team members. The company recently launched the NVIDIA Nemotron Coalition, a global collaboration of AI labs focused on advancing open, frontier-level foundation models through shared expertise and resources.

AI Agents: The Future of Work?

A key takeaway from discussions at GTC was the growing capability of AI agents. According to Cursor CEO Michael Truell, “We’re soon going to witness agents really be coworkers that can take on tasks that take many hours or many days, and do incredibly complex workloads.” This suggests a future where AI handles increasingly sophisticated tasks, freeing up human workers to focus on more strategic initiatives.

Orchestrating the AI Ecosystem

Perplexity CEO Aravind Srinivas envisions a future where AI isn’t about selecting the “best” model, but rather orchestrating a “multimodal, multi-model and multi-cloud orchestra.” The system itself will intelligently delegate tasks to the most appropriate model, simplifying the process for users.

Trust and Accessibility Through Open Systems

Open systems are gaining traction due to their inherent trustworthiness and accessibility. AMP PBC’s Anjney Midha noted, “At the end of the day, you’re delegating trust…and it’s much easier to trust an open system.” This transparency fosters confidence and allows for wider adoption of AI technologies.

The Importance of Both Generalist and Specialist AI

Just as a hospital relies on both general practitioners and specialized surgeons, society needs both generalist and specialist AI. Open foundations combined with proprietary data allow organizations to unlock unique value and drive innovation in both academia and business. Ai2’s Hanna Hajishirzi emphasized that open access accelerates progress and democratizes AI, ensuring broader participation and benefit.

Black Forest Labs’ Robin Rombach added that both frontier models and specialized open models have exciting potential, and that all of them should have some open component.

FAQ

Q: What is the NVIDIA Nemotron Coalition?

A: It’s a global collaboration of AI labs working to advance open, frontier-level foundation models through shared expertise, data, and compute.

Q: What is the key message from Jensen Huang regarding open vs. proprietary AI?

A: It’s not an either/or situation. Both open and proprietary AI are essential and should coexist.

Q: What role will AI agents play in the future?

A: They are expected to develop into highly capable coworkers, handling complex tasks and workloads.

Q: Why is specialization important in AI?

A: Different industries have unique challenges that require tailored AI solutions.

Watch the GTC session highlights on YouTube and start building with NVIDIA Nemotron open models.