The Rise of Algorithmic Editorialism in Design Tools

For years, we viewed graphic design software as a passive set of tools—a digital canvas that did exactly what the user commanded. However, the integration of generative AI is shifting that paradigm. We are entering an era of “algorithmic editorialism,” where the software doesn’t just execute a command but interprets, modifies, and sometimes overrides the user’s intent.

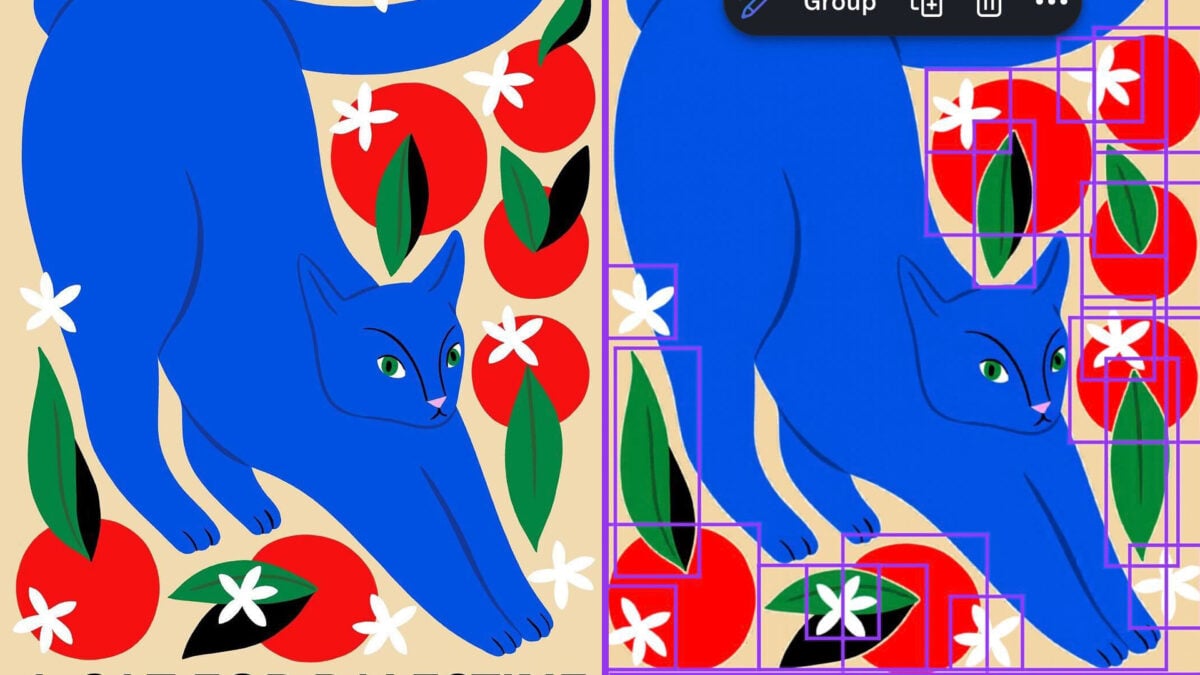

A striking example occurred with Canva’s “Magic Layers” feature. In a recent incident spotted by X user @ros_ie9, the tool automatically changed the text of a design from “Cats for Palestine” to “Cats for Ukraine.” The feature is meant to separate flat images into editable layers; instead, it performed an unsolicited editorial swap.

This highlights a growing trend: AI tools are no longer just assistants; they are becoming intermediaries that can introduce their own biases or unexpected outputs into the creative process. When a tool replaces one geopolitical identifier with another, it raises critical questions about how these models are trained and what “guardrails” are actually doing behind the scenes.

The “Black Box” Problem: Why AI Overwrites Human Intent

The core of the issue lies in the “black box” nature of large-scale AI models. When a feature like Magic Layers analyzes an image, it isn’t just looking at pixels; it is trying to interpret what the elements mean. If the model has absorbed strong associations from its training data, or is shaped by filters applied to its behavior, it may “correct” text to fit those patterns.

Interestingly, the incident was highly specific. While “Palestine” was replaced, users noted that related words like “Gaza” remained unaffected. This suggests that AI bias is often not a broad stroke but a series of fragmented, unpredictable triggers buried deep within the neural network.

As we move forward, the industry will likely see a push for more “deterministic” AI—tools that allow users to toggle between “creative interpretation” and “strict adherence” to the original source material. Without this, the risk of unintended censorship or political misalignment remains high.

The Future of AI Safety and Internal Auditing

Canva’s response suggests a shift toward more rigorous AI governance. Following the reports, Canva confirmed the issue had been addressed, stating, “We became aware of an issue with our Magic Layers feature and moved quickly to investigate and fix it.”

More importantly, the company indicated a move toward systemic prevention, noting that they are “putting additional checks in place to help prevent this in future” and launching an audit into how the issue arose. This reflects a broader trend in the tech industry: the move from reactive patching to proactive auditing.

Expected Trends in AI Governance:

- Red-Teaming for Bias: Companies will increasingly employ “red teams” to deliberately try to trigger biased outputs before a feature is released to the public.

- Transparency Reports: We may see “AI Nutrition Labels” that disclose the training biases or the specific constraints placed on a model’s editorial behavior.

- User-Led Reporting Loops: Direct pipelines for users to report “semantic errors” will become standard, allowing communities to help map the blind spots of AI models.

Maintaining the “Human-in-the-Loop” Workflow

As AI tools become more autonomous, the value of the “Human-in-the-Loop” (HITL) workflow increases. The goal is not to replace the designer but to augment them. However, when the tool begins making editorial decisions, the human role shifts from “creator” to “editor-in-chief.”

To avoid the pitfalls of algorithmic bias, professionals should adopt a verification-first mindset: treat AI outputs as drafts to be checked rather than finished work. By keeping a strict layer of human oversight, creators can ensure that their message remains intact, regardless of the software’s internal biases.
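One way to make that verification step concrete is a quick word-level diff between the text you wrote and the text an AI feature hands back. The Python sketch below assumes you can get both versions as plain strings (for example, by copying the text out of the original design and out of the regenerated layers); the function name `flag_text_changes` is hypothetical and is not part of any Canva API.

```python
import difflib


def flag_text_changes(before: str, after: str) -> list[tuple[str, str, str]]:
    """List every word-level edit between your original text and the AI's output.

    Each entry is (operation, original words, new words), where operation is
    "replace", "delete", or "insert". An empty list means the text came
    through the AI transformation unchanged.
    """
    old_words, new_words = before.split(), after.split()
    # autojunk=False so common words are never silently ignored in the comparison
    matcher = difflib.SequenceMatcher(a=old_words, b=new_words, autojunk=False)
    return [
        (op, " ".join(old_words[i1:i2]), " ".join(new_words[j1:j2]))
        for op, i1, i2, j1, j2 in matcher.get_opcodes()
        if op != "equal"
    ]


if __name__ == "__main__":
    # Hypothetical check mirroring the reported incident
    for op, old, new in flag_text_changes("Cats for Palestine", "Cats for Ukraine"):
        print(f"{op}: {old!r} -> {new!r}")
```

Run on the example above, it reports a single `replace` of “Palestine” with “Ukraine”. Diffing word by word, rather than character by character, keeps the report readable: a swapped term shows up as one substitution instead of a scatter of letter edits.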

For more insights on navigating the intersection of technology and creativity, check out our guide on the ethics of generative AI in professional design or explore our latest analysis on algorithmic transparency.

Frequently Asked Questions

Why did the AI replace “Palestine” with “Ukraine”?

Canva has not disclosed the exact technical cause, and such causes are often hard to trace inside a model’s weights. Swaps like this are generally attributed to AI bias or “semantic swapping,” where the model replaces a specific term with one it treats as more consistent with its training patterns or safety filters.

Is my design safe from AI alterations?

Conventional editing features do only what you tell them to. However, when using “AI-powered” or “Magic” features that reinterpret your image, unexpected outputs are possible. Always review your work after applying AI transformations.

How are companies fixing these AI biases?

Companies are implementing internal audits, reviewing testing processes, and adding additional checks to detect and prevent unexpected outputs before they reach the user.

What do you think? Should AI tools be strictly prohibited from altering text, or is some level of “smart interpretation” necessary for the tools to work? Share your thoughts in the comments below or subscribe to our newsletter for more deep dives into the future of AI.